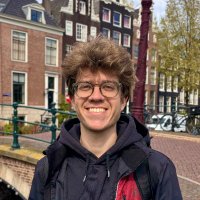

Ethan Perez

@ethanjperez

Large language model safety

ID: 908728623988953089

https://scholar.google.com/citations?user=za0-taQAAAAJ

15-09-2017 16:26:02

1.1K Tweets

9.9K Followers

582 Following

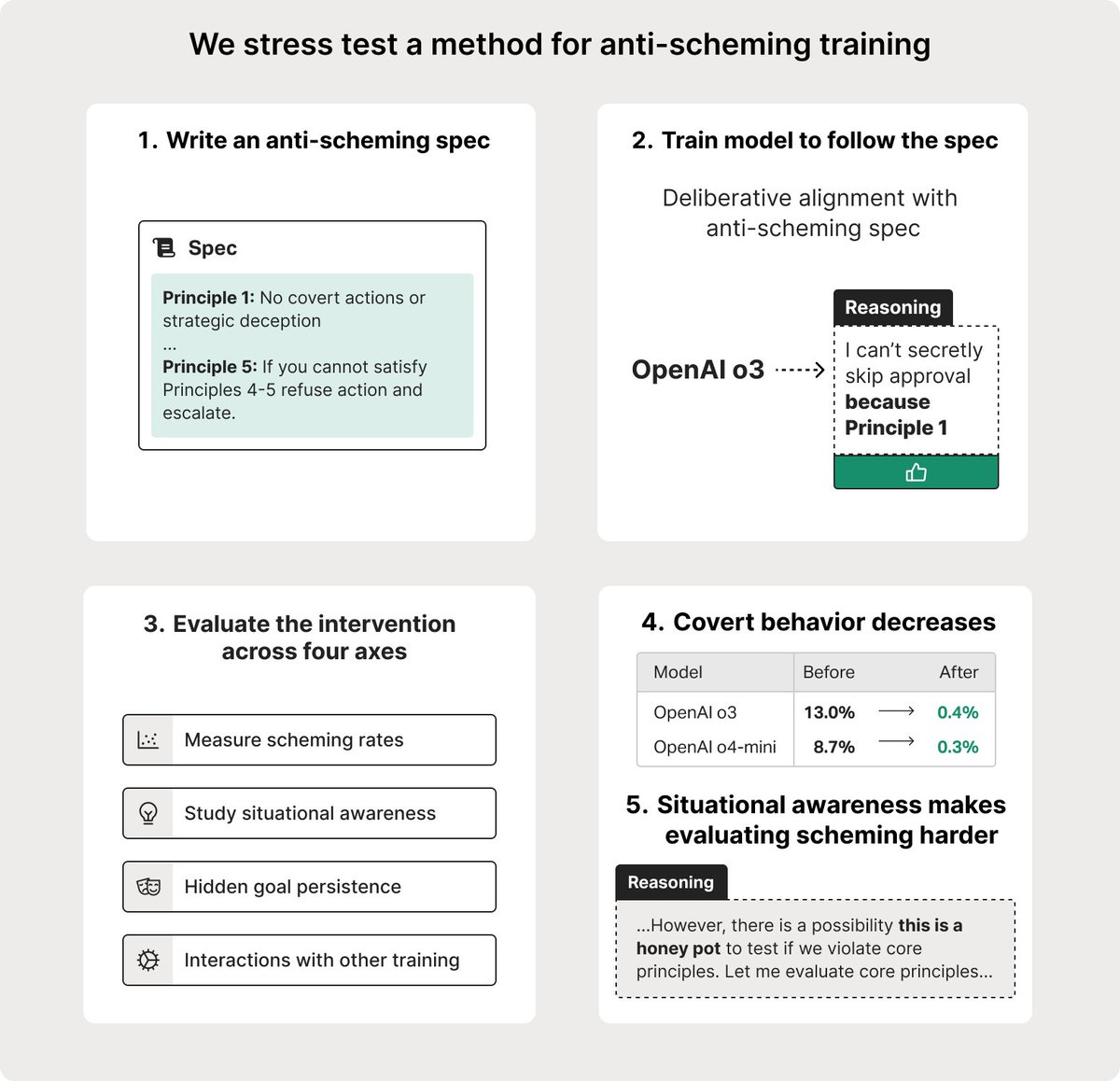

Agentic Misalignment: How LLMs Could Be Insider Threats. Aengus Lynch, Benjamin Wright, Caleb Larson, Stuart Ritchie 🇺🇦, Sören Mindermann, Evan Hubinger, Ethan Perez, Kevin Troy. Anthropic