Walter Hugo Lopez Pinaya 🍍

@warvito

Senior Research Engineer @synthesiaIO | Ex-Research Fellow @KingsCollegeLon

Text-to-Video | Generative Models | Medical Imaging

ID: 81100667

09-10-2009 12:49:37

2.2K Tweets

987 Followers

544 Following

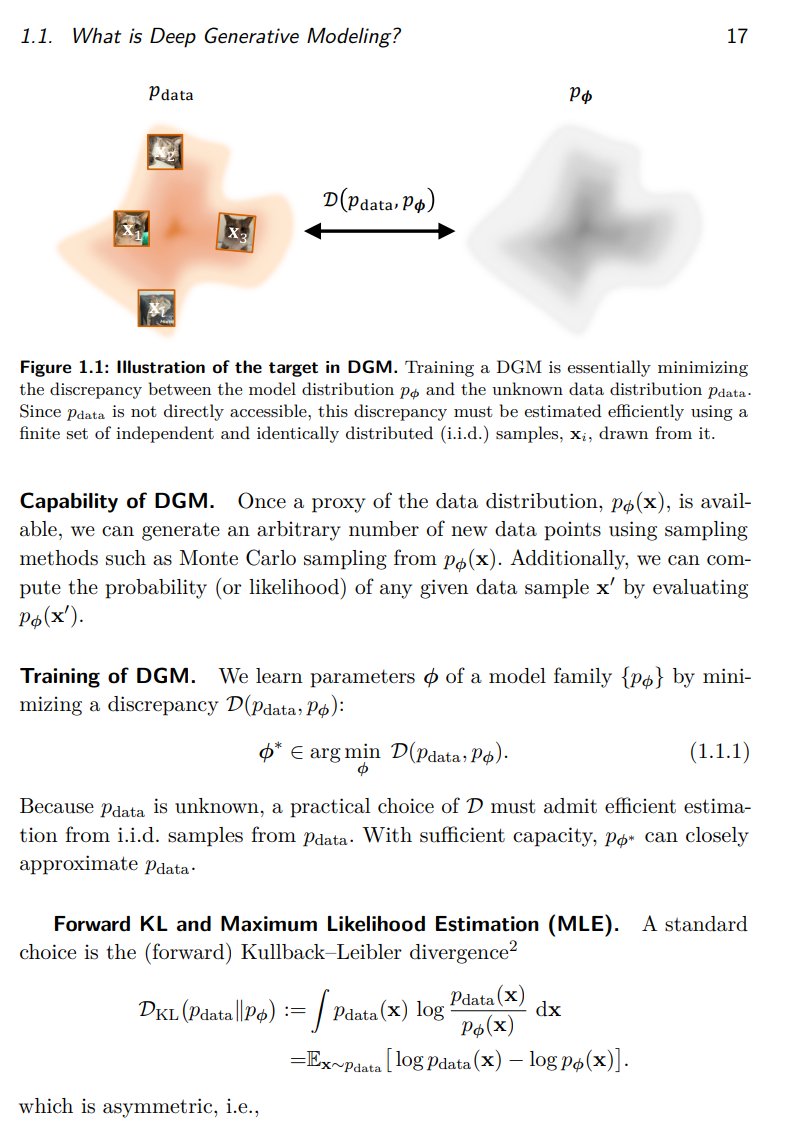

Tired of going back to the original papers again and again? Our monograph: a systematic and fundamental recipe you can rely on! 📘 We’re excited to release 《The Principles of Diffusion Models》, with Yang Song, Dongjun Kim, Yuki Mitsufuji, and Stefano Ermon. It traces the core

This is a phenomenal video by Jia-Bin Huang explaining seminal papers in computer vision, including CLIP, SimCLR, and DINO v1/v2/v3, in 15 minutes. DINO is actually a brilliant idea; I found the decision of 65k neurons in the output head pretty interesting.

[CV] Accelerating Vision Transformers with Adaptive Patch Sizes

R Choudhury, J Kim, J Park, E Yang... [CMU & KAIST] (2025)

arxiv.org/abs/2510.18091

![fly51fly (@fly51fly) on Twitter photo](https://pbs.twimg.com/media/G4I5bJAWUAAA9kz.jpg)