Shreshth Malik

@shreshthmalik

Machine Learning PhD student @OATML_Oxford @aims_oxford

ID: 1108087111822004229

http://shreshth.me 19-03-2019 19:25:29

21 Tweets

241 Followers

714 Following

Congratulations to Charig Yang, who won best presentation, and @jacksonmattt and @shreshthmalik, who won best poster, at the joint CDT student conference with the Bristol, Lincoln and Edinburgh CDTs. Autonomous Intelligent Machines & Systems @Oxford, University of Oxford

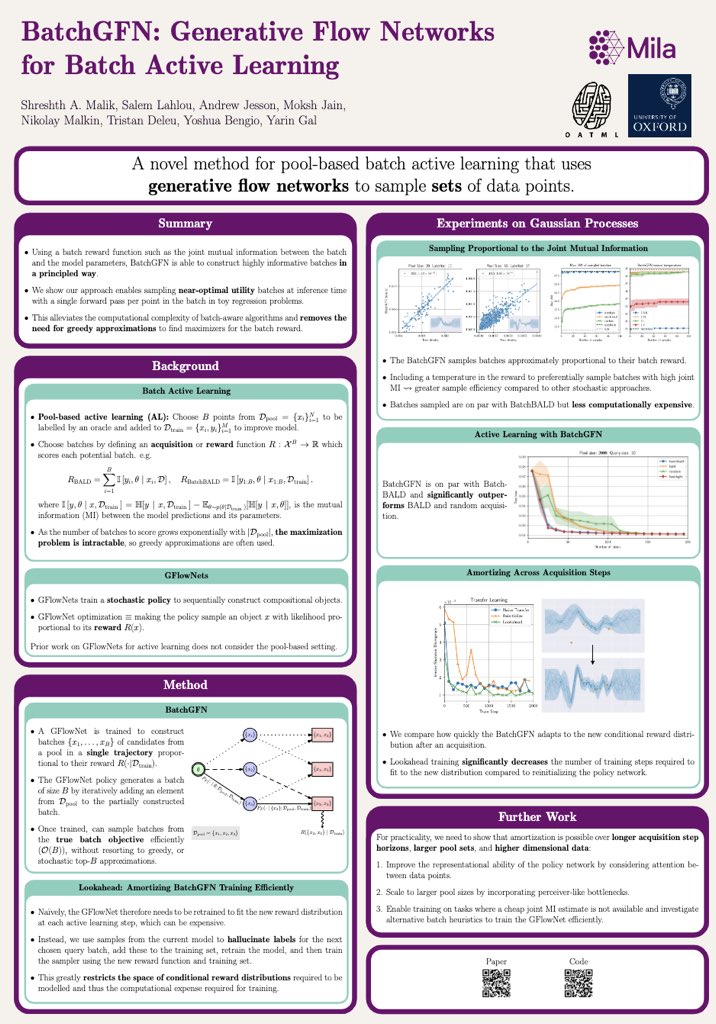

At #ICML today? Come chat with us about a new method to sample batches for active learning! SPIGM: spigmworkshop.github.io. Poster sessions: 12-1pm and 4-5pm. 📄 arxiv.org/abs/2306.15058 with Shreshth Malik, Salem Lahlou, Moksh Jain, Kolya Malkin, Tristan Deleu, Yoshua Bengio, Yarin

Happy to share that I'll be helping the UK taskforce as director of research (together with David Krueger). We're heavily recruiting - if you have technical expertise and want to work on Frontier Models (LLMs, generative AI), please read here x.com/soundboy/statu…

We’re organising a virtual open day! 📅 4-5:30 PM GMT on 22 Nov 2023 Anyone interested in joining OATML_Oxford 🥣🌾for a PhD is welcome! Come to meet OATMLers, get a feel for our group, and ask any questions you may have! ✏️ Sign Up: forms.gle/MSMwp3wvUR9Cnc…

We're looking for 2 postdocs to join OATML_Oxford, working on Foundational AI Safety. You'll lead and contribute to projects aimed at developing principled and practical safe AI methods that would be used in real systems, e.g. in the medical domain. Closing date: 12th June. Links >>

After thousands of microscope hours and several years of painstaking optimisation, Callie Glynn and I are very excited to share our latest manuscript. We outline our approach to investigate the molecular organisation within hippocampus tissue using cryo-ET.

I'm at #ICCV2025 and will be giving a keynote talk tomorrow at the SaFeMM-AI Workshop @ ICCV25 at 10:55 (room 308 B). Drop by to say hi! I'm also looking for PhD students and postdocs to join OATML_Oxford, so I'm happy to chat during the conference. Email: [email protected]