Patrick Yin

@patrickhyin

phd @uwcse, undergrad @berkeleyai

ID: 1664673670051270657

http://patrickyin.me

02-06-2023 16:41:55

16 Tweets

101 Followers

141 Following

So you want to do robotics tasks requiring dynamics information in the real world, but you don’t want the pain of real-world RL? In our work to be presented as an oral at ICLR 2024, Marius Memmel showed how we can do this via a real-to-sim-to-real policy learning approach. A 🧵 (1/7)

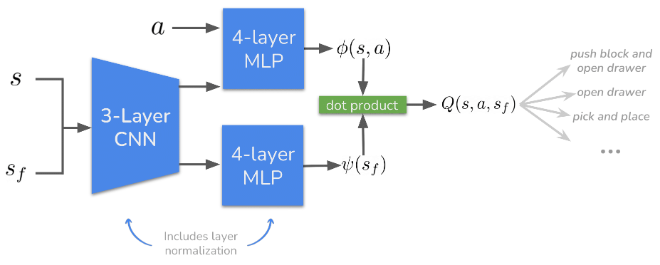

How can we train RL agents that transfer to any reward? In our NeurIPS paper DiSPO, we propose to learn the distribution of successor features of a stationary dataset, which enables zero-shot transfer to arbitrary rewards without additional training! A thread 🧵 (1/9)