Jiahui Gao

@jiahuigao3

ID: 1022503292792799232

26-07-2018 15:25:56

41 Tweets

262 Followers

395 Following

Come play chess with our diffusion reasoning model here: lichess.org/@/diffusearchv0 by Jiacheng Ye! Check out our research on diffusion reasoning models (DREAMs) here: ikekonglp.github.io/dreams.html to learn how our discrete diffusion approach enables implicit search capabilities!

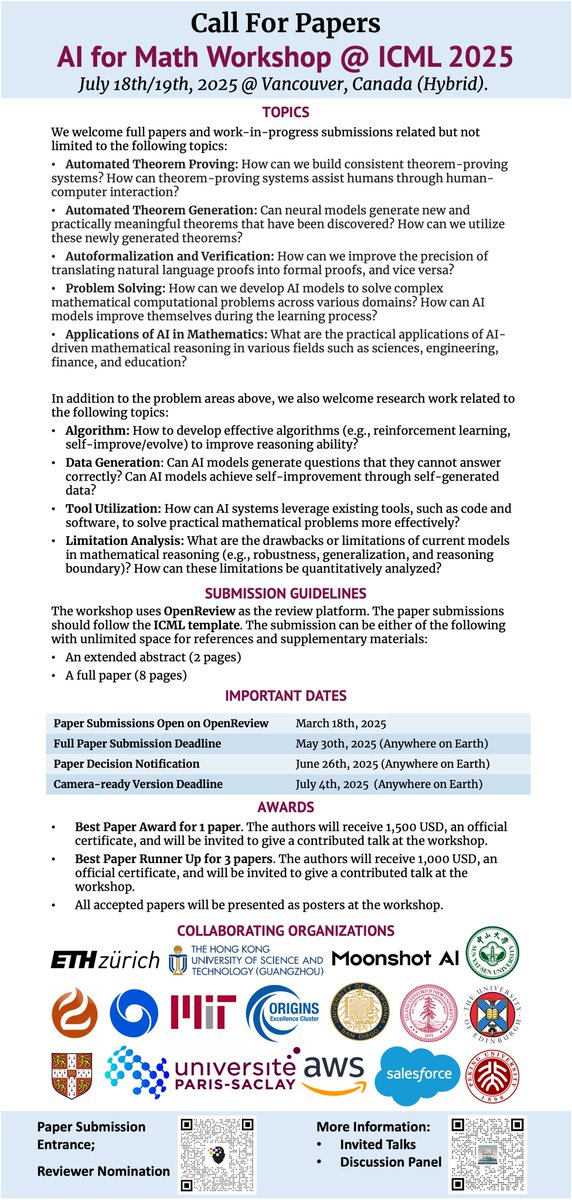

📣🔊 Excited to announce the 2nd AI for Math Workshop at #ICML2025 ICML Conference! 🔍 Workshop details: sites.google.com/view/ai4mathwo… 📜 Submit your pioneering work: sites.google.com/view/ai4mathwo…… 🙋 Reviewer nomination: goo.su/UlL3GJ

Chuanyang Jin (@chuanyang_jin): How to achieve human-level open-ended machine Theory of Mind?

Introducing #AutoToM: a fully automated and open-ended ToM reasoning method combining the flexibility of LLMs with the robustness of Bayesian inverse planning, achieving SOTA results across five benchmarks. 🧵[1/n]

![Chuanyang Jin (@chuanyang_jin) on Twitter photo](https://pbs.twimg.com/media/GksB2wuXQAAT9I9.jpg)