Thomas Chaton

@chaton_thomas

Research Engineering Manager at @PyTorchLightnin | @gridai_

ID: 1262371033480400899

18-05-2020 13:14:50

91 Tweets

112 Followers

22 Following

🚀 Excited to launch HPU training in partnership with Habana Labs! Every @LightningAI model already supports it! Trainer(accelerator="hpu") 🤯 🤯

A big shout out to everyone contributing to the development of PyTorch: PyTorch, PyTorch Live, @LightningAI. Ryan O'Connor, I borrowed from your article. assemblyai.com/blog/pytorch-v…

Amazing PyTorch-lightning tutorials with code by Phillip Lippe. Tutorials cover many deep learning topics like transformers, energy-based models, GNNs, and more! We recommend the tutorials for everyone starting their DL journey: pytorch-lightning.readthedocs.io/en/latest/

Would you like to be able to add a Panel #dataapp to your @LightningAI App? Check out my Live App: …xzp3npc9cr2dmthh.litng-ai-03.litng.ai/view/Home Repo: github.com/marcskovmadsen… PR to Lightning Docs: github.com/Lightning-AI/l… PyTorch #python #machinelearning #deeplearning

What if you could launch new products and AI startups in only a few days with Lightning AI ⚡️ and our new apps framework! This Lightning app for stable diffusion took 2 weeks to build with 2 engs. Shout out to Emad and Stability AI for providing awesome opensource models

Prepare a 1 trillion token dataset to train LLMs from scratch in under 4 hours instead of days with Lightning AI ⚡️ Studio! Everything is included, the final datasets, the code, dependencies, etc... Get started in seconds as no setup is needed. lightning.ai/lightning-ai/s…

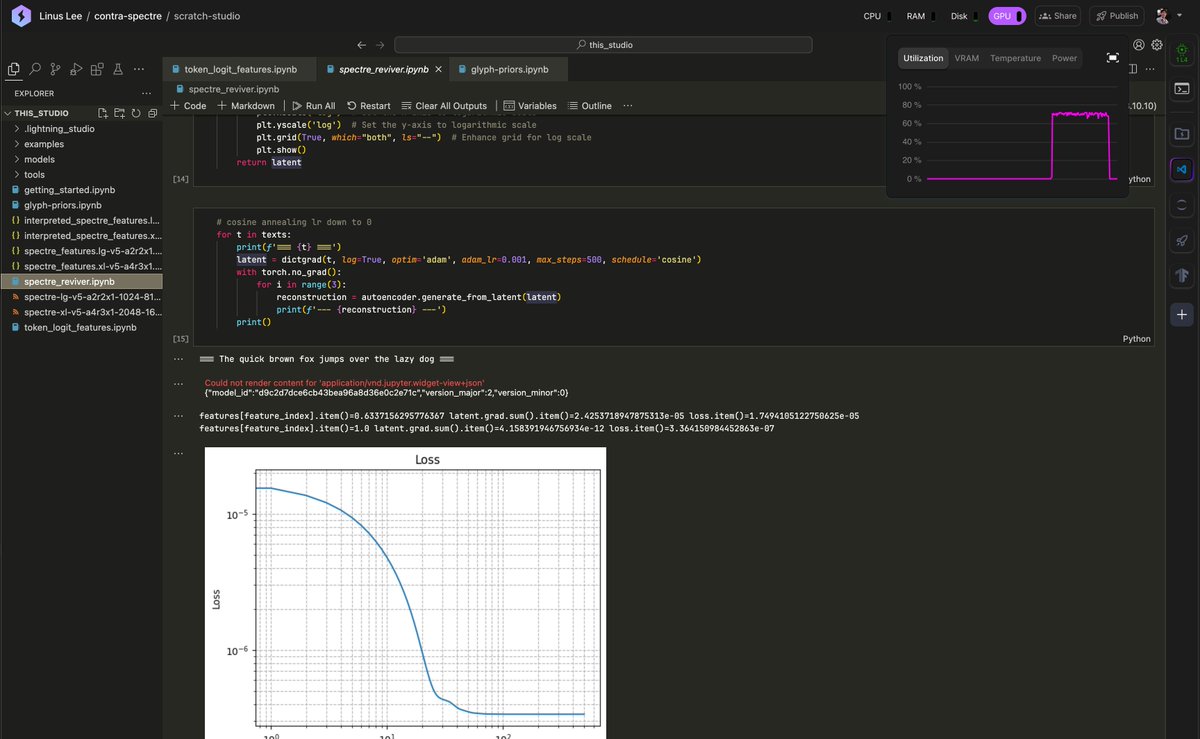

A while ago I complained here about persistent storage in Google Colab. Have been using Lightning AI ⚡️ Studios for a while now for: - Full VSCode (incl. GH Copilot) - Persisted files shared across notebooks - Multi-GPU/node (!!) It's been great. Feels like a remote ML workstation

Here I show you how to finetune and deploy DeepSeek R1 (8B) for < $1.00 in 8 minutes using the AI Hub from Lightning AI ⚡️ ⚡️⚡️