Harry

@categorified

Beauty is truth, truth beauty,—that is all ye know on earth, and all ye need to know.

ID: 1720097875341000705

02-11-2023 15:17:55

17 Tweets

5 Followers

103 Following

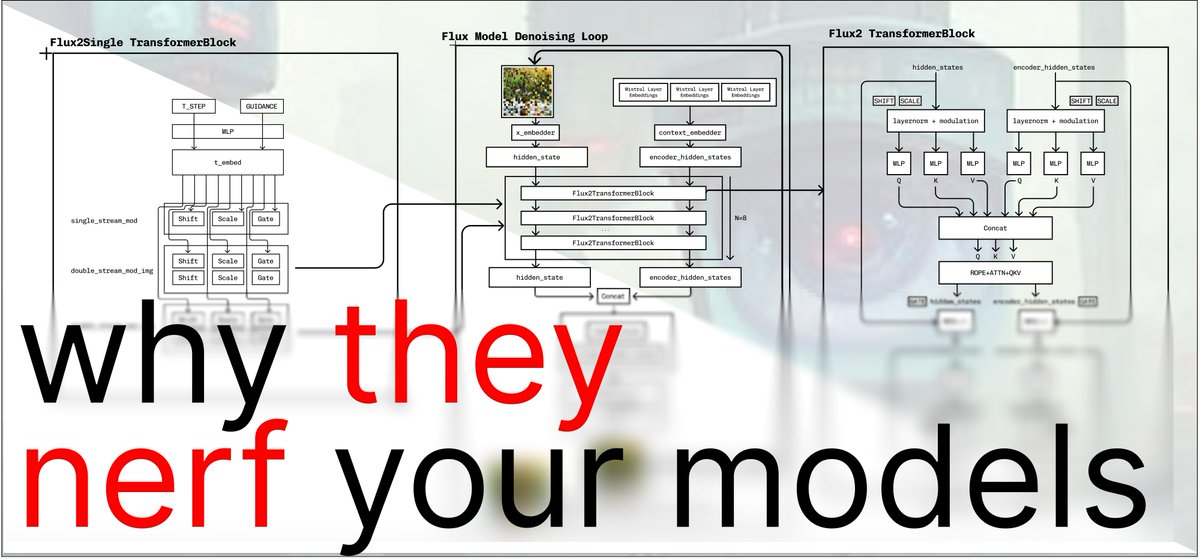

LLMs are amnesiacs. Once context fills up, they forget everything. To fight this means grappling with a core question: how do you update a neural network without breaking what it already knows? In this piece, Charlie O'Neill and Harry Partridge argue that continual learning is