Aryan kolapkar (e/acc)

@aryankolapkar

IITB '23 | AI builder | I use twitter as my note keeping app

text2shorts.com

thescript.ink

ID: 4516353076

https://text2shorts.com 17-12-2015 18:03:48

740 Tweets

37 Followers

368 Following

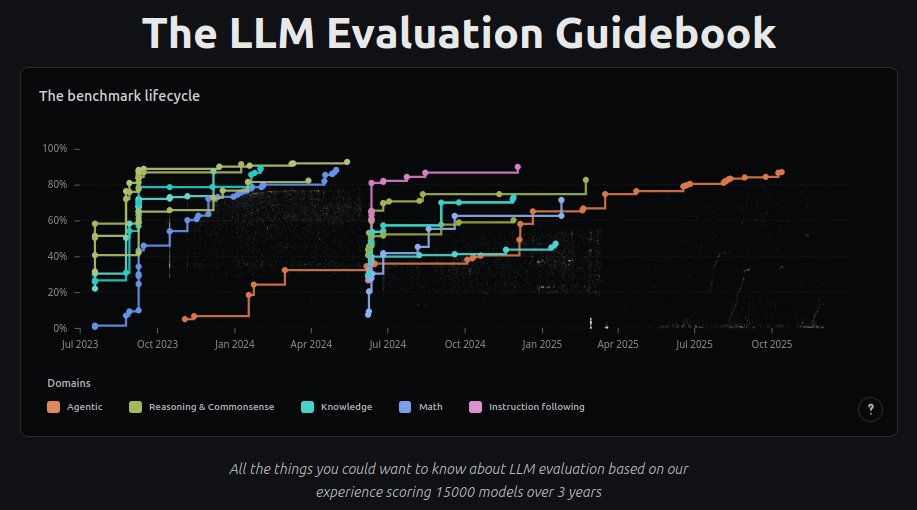

Hey twitter! I'm releasing the LLM Evaluation Guidebook v2! Updated, nicer to read, interactive graphics, etc! huggingface.co/spaces/OpenEva… After this, I'm off: I'm taking a sabbatical to go hike with my dogs :D (back at Hugging Face in Dec *2026*) See you all next year!