Deniz Yuret

@denizyuret

@kuisaicenter founding director

ID: 2412037924

http://www.denizyuret.com 15-03-2014 06:43:50

408 Tweets

3.3K Followers

211 Following

Check out our new preprint on the connections between machine learning and nonequilibrium physics: arxiv.org/abs/2306.03521. Shishir Adhikari started this as his last PhD work, and it has grown into a fun collaboration with Alkan Kabakcioglu, Alex Strang, and Deniz Yuret (1/n)

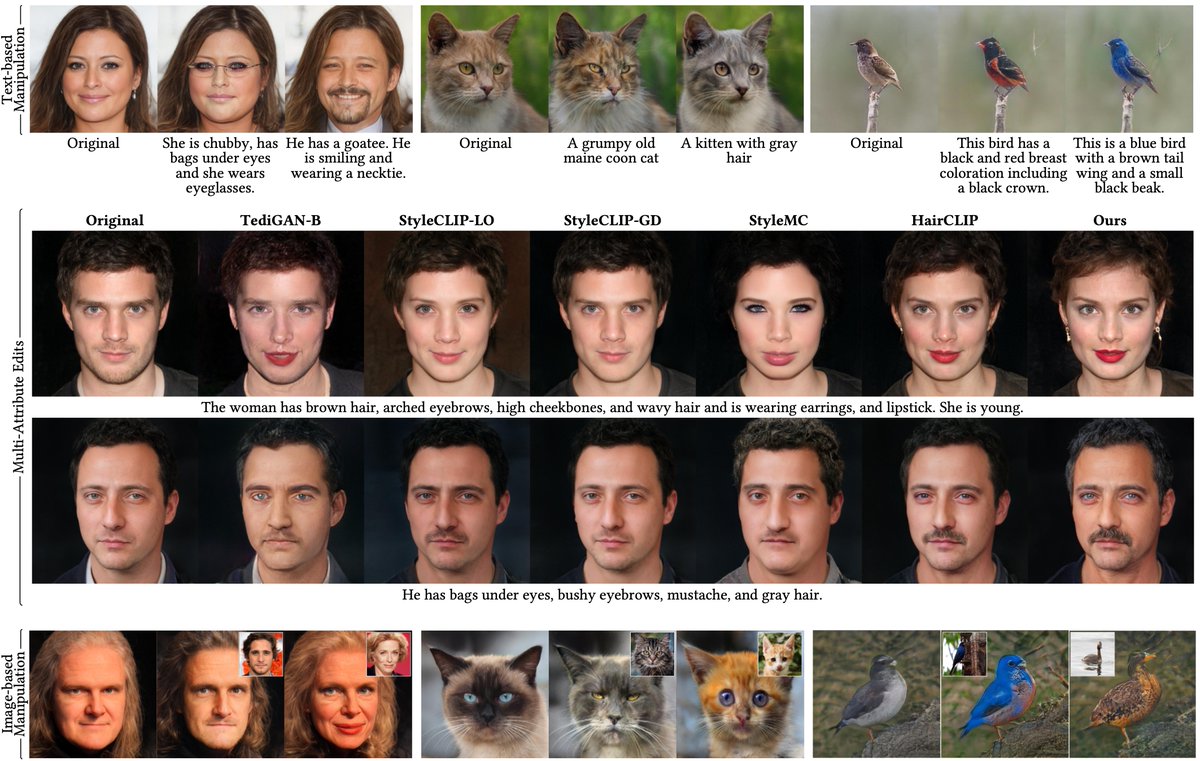

🎉 Exciting news! Our paper “CLIP-Guided StyleGAN Inversion for Text-Driven Real Image Editing” has been accepted to ACM Trans. on Graphics and will be presented at SIGGRAPH Asia ➡️ Hong Kong 2023. Co-authored with Canberk Baykal, Abdul Basit Anees, Duygu Ceylan, Erkut Erdem and Deniz Yuret. 1/4

Congratulations to Emirhan Kurtuluş for this amazing work!

Joint work with Barış Batuhan Topal and Prof. Deniz Yuret. arxiv.org/abs/2211.10641

📢✨ Next Tue (Nov 14) at 10:00 am, we'll have Kyunghyun Cho *in person* at KUIS AI Center, Koç University: "Beyond Test Accuracies for Studying Deep Neural Networks". For registration and details: [email protected] or just DM! #kuisaitalks #ArtificialInteligence

youtu.be/p5xWWrS8XLA?si… The past, present, and future of artificial intelligence. Prof. Dr. Deniz Yuret, Koç University faculty member | Director, İş Bank Artificial Intelligence Application and Research Center. studioberlin Koç Üniversitesi #yapayzeka #DeepLearning #DenizYüret Deniz Yuret KUIS AI @kuisaicenter

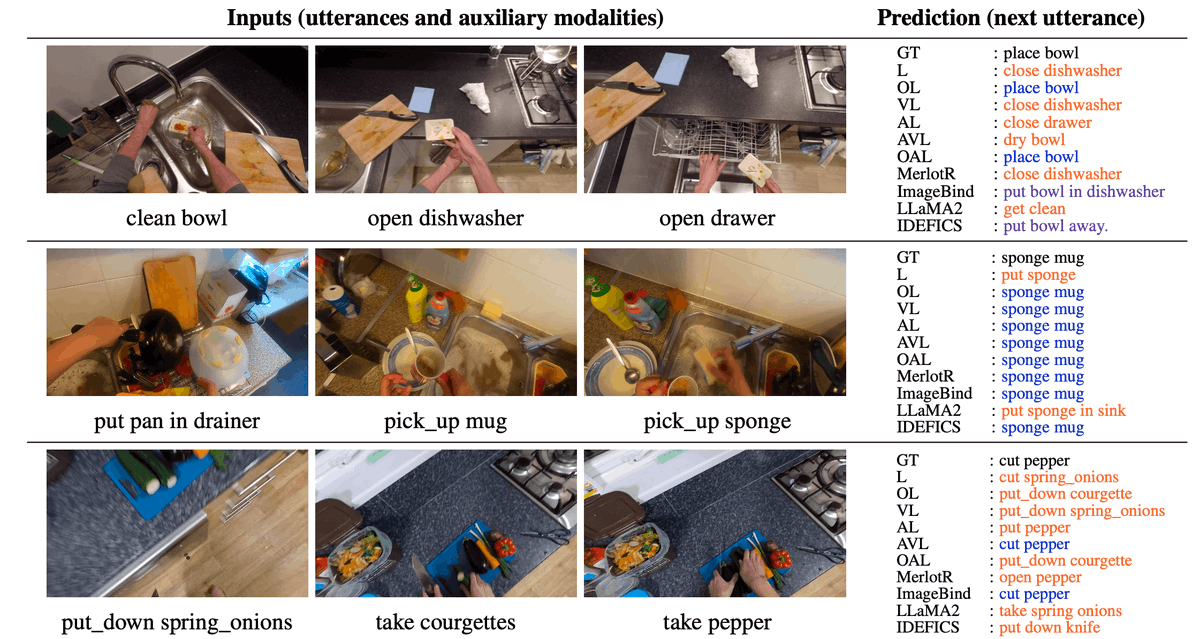

1. 🧵🎉 Excited to share that our paper "Sequential Compositional Generalization in Multimodal Models" has been accepted as a long paper at #NAACL2024! 🌟 We'll be presenting our findings in Mexico City this June (NAACL HLT 2024). Dive into the full details here 👇 - Paper:

🎉 Excited to share our new work: “Bridging the Bosphorus: Advancing Turkish Large Language Models through Strategies for Low-Resource Language Adaptation and Benchmarking”! #AI #NLProc #TurkishNLP 🇹🇷 🚀 📄Paper: arxiv.org/abs/2405.04685 🌐Website: emrecanacikgoz.github.io/Bridging-the-B… (1/7)

We got together for a very enjoyable panel at the 7th TRAI May Workshop, organized by the Türkiye Yapay Zeka İnisiyatifi (Türkiye Artificial Intelligence Initiative). Deniz Yuret Sabri Gökmenler Halil Aksu Türkiye Yapay Zeka İnisiyatifi #yapayzeka #AI

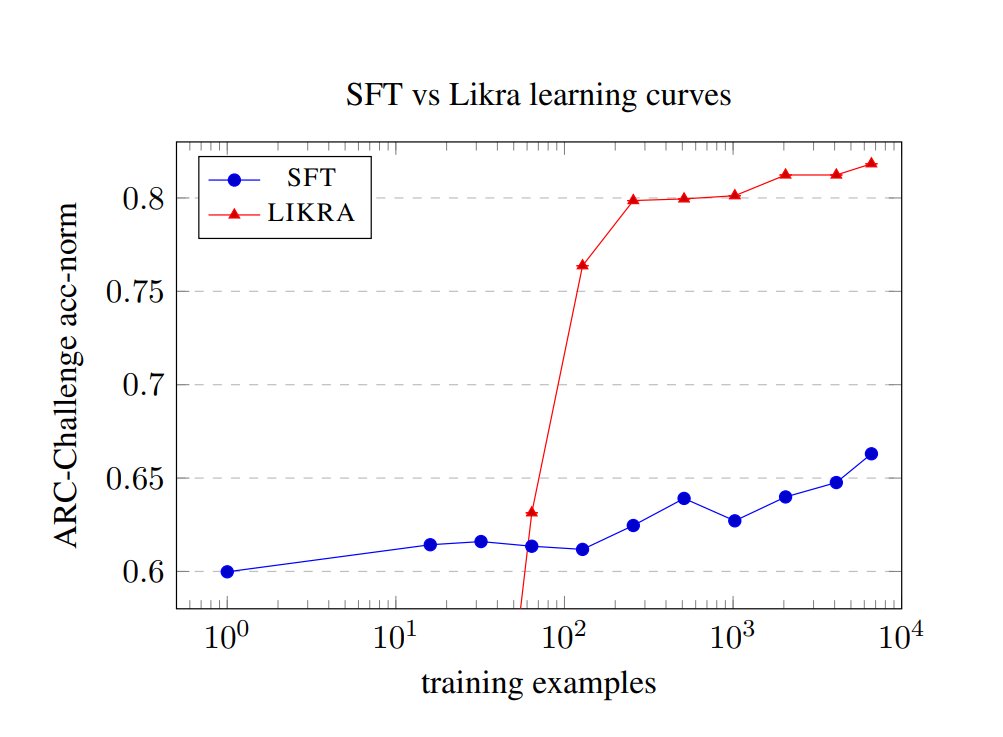

Have you ever seen a learning curve that looks like a step function? It turns out a few hundred negative examples flip a switch inside an LLM and give a discrete jump in accuracy. "How much do LLMs learn from negative examples?" (arxiv.org/abs/2503.14391) with Shadi Hamdan.