Tsun-Yi Yang 楊存毅 🇹🇼🏳️‍🌈

@shamangary

A proud Taiwanese boy. @RobinAI_UK LLM research engineer. Ex-Meta. PhD in computer vision at National Taiwan University (NTU)

ID: 1537755079

22-06-2013 02:05:34

1.1K Tweets

471 Followers

656 Following

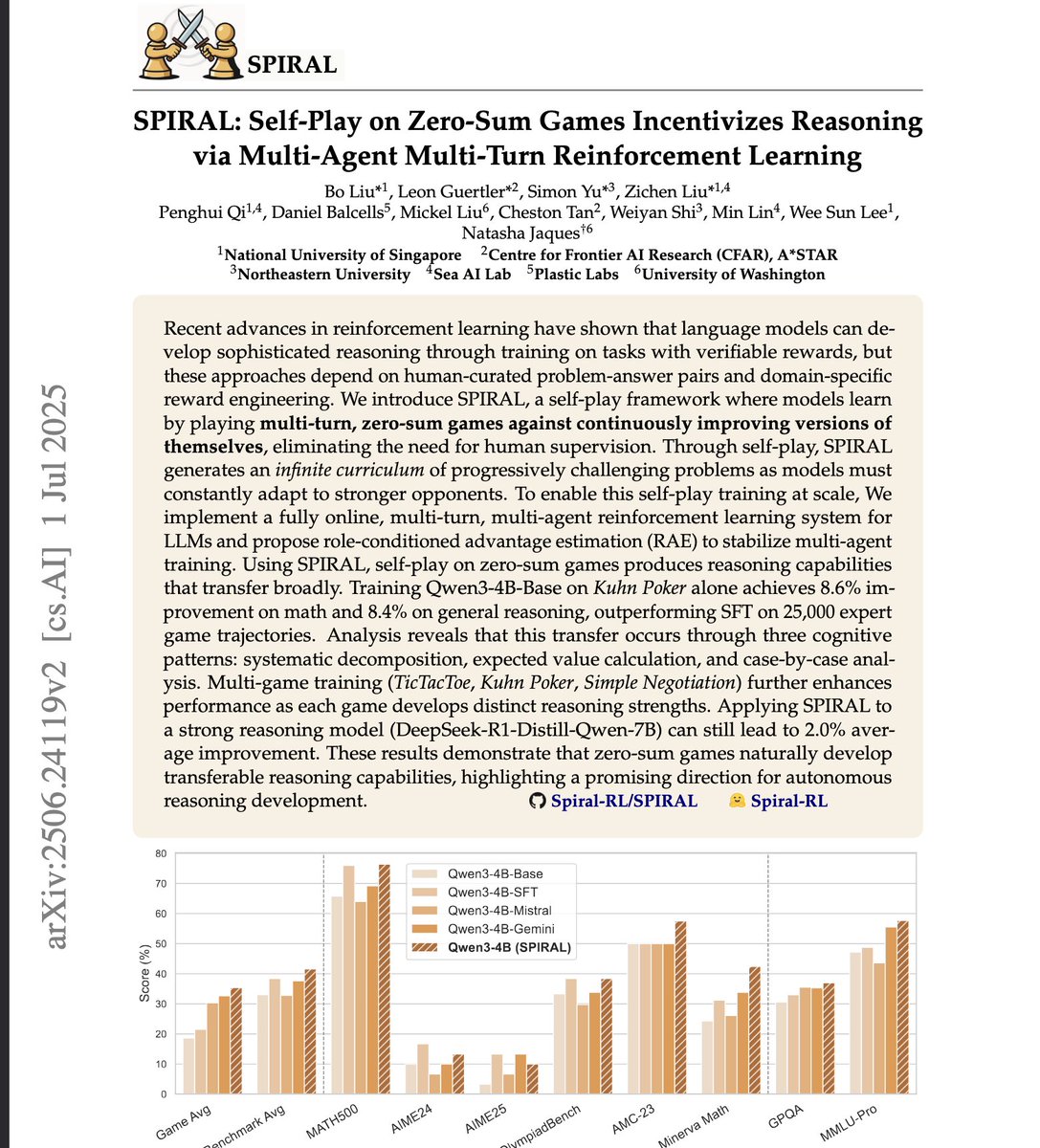

sorry Meta "superintelligence" lab but Andrew Zhao et al. did this better and you don't cite them. Actually, many have (eg Bo Liu (Benjamin Liu)), whether joint maximization or minimax, verifiers or RMs. Why kang of a breakthrough? Good job evaluating on Vicuna tho, peak 2023 llamacore