Njdeh Satourian

@satourian

Thinking step-by-step at @cerebrassystems

ID: 1292764709473714177

10-08-2020 10:08:43

178 Tweets

300 Followers

1.1K Following

Cerebras is now the 🥇 inference provider on Hugging Face, serving 5M monthly requests.

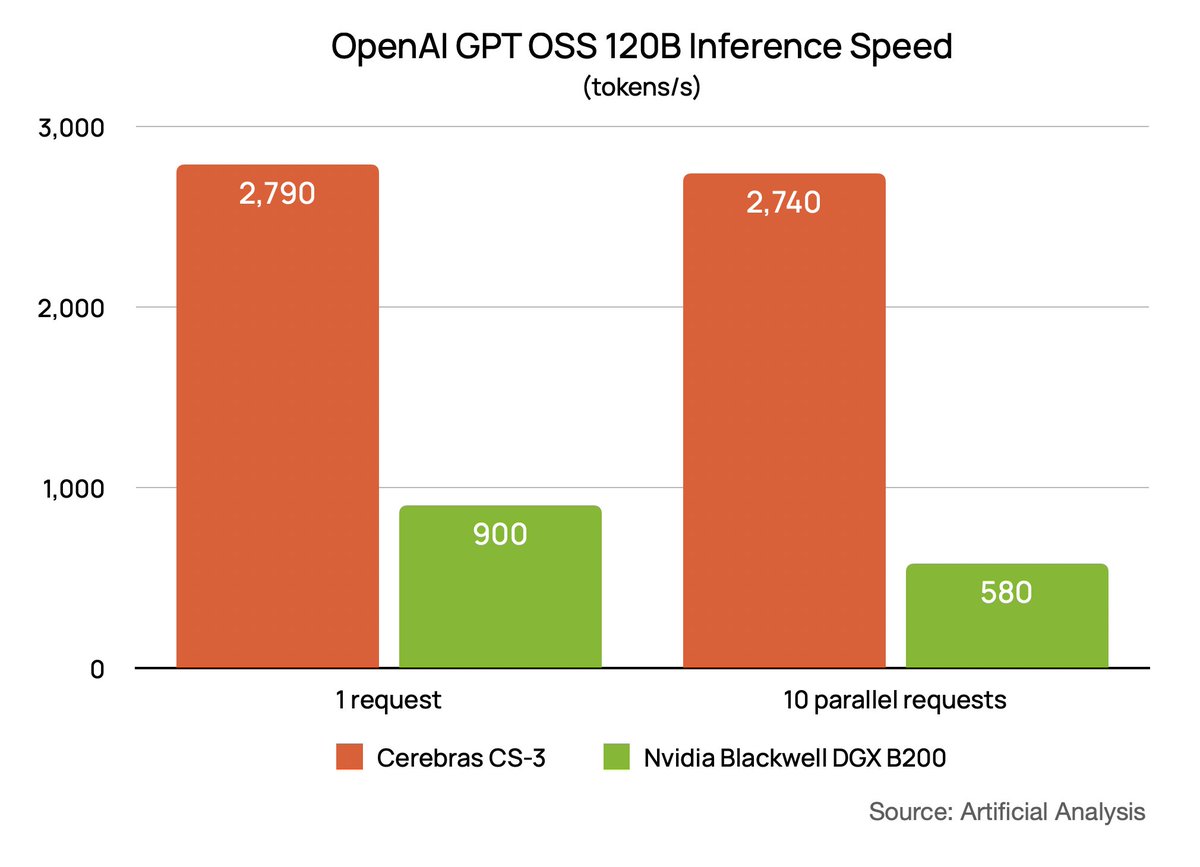

The first Nvidia Blackwell results for GPT OSS 120B are out! Artificial Analysis ran Cerebras vs. Nvidia head to head. Blackwell DGX B200: 1 user = 900 TPS, 10 users = 580 TPS. Cerebras CS-3: 1 user = 2,790 TPS, 10 users = 2,740 TPS. Also, Cerebras is live in production.

Cerebras Inference powers the world's top models, like Qwen3 Coder at 2,000 tokens/s 🤯 And you can now access these models directly in Visual Studio Code with the extension and an API key (which you can get for free from cerebras.ai!): aka.ms/VSCode/Cerebras

Pay-as-you-go is now available on the Amazon Web Services Marketplace. Use your AWS account (no upfront costs, no lock-ins) to serve frontier models 20x faster than leading GPUs.