JSALT 2022 - pre-training team

@jsalt_pretrain

Please follow the Twitter account of the pre-training team at JSALT 2022. The team will tweet its new findings here.

ID: 1528250731203284992

https://jsalt-2022-ssl.github.io/

22-05-2022 05:45:59

48 Tweets

122 Followers

0 Following

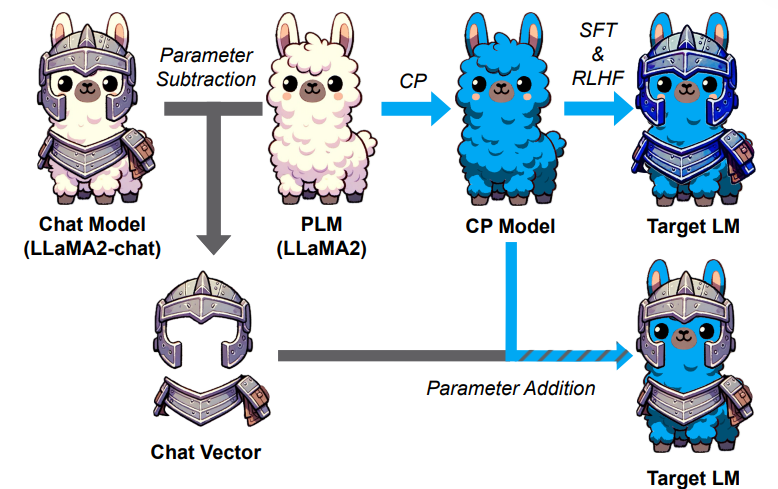

🎉🌱 In the early stages of my research journey, I'm humbly honored to receive the Google PhD Fellowship 🏆. There is so much more to learn, discover, and explore on this exciting path 🚀 🙏 Infinite thanks to my advisor, Hung-yi Lee (李宏毅), for his guiding light. This couldn't have happened without him.