Shijie Wu

@ezrawu

MTS @AnthropicAI. PhD at @jhuclsp. ex @Bloomberg AI/@AIatMeta. He/Him. Opinions are my own. DM open. Threads @ezra_wu

ID: 18045865

11-12-2008 11:55:57

217 Tweets

1.1K Followers

1.1K Following

Scaling LMs works well. Are more parameters and data all it takes, or do certain architectural features or language styles bring out emergent abilities sooner? Let’s investigate by seeing what it takes for syntax 🌳 to emerge! At ACL! w/ Tal Linzen 📜 arxiv.org/abs/2305.19905

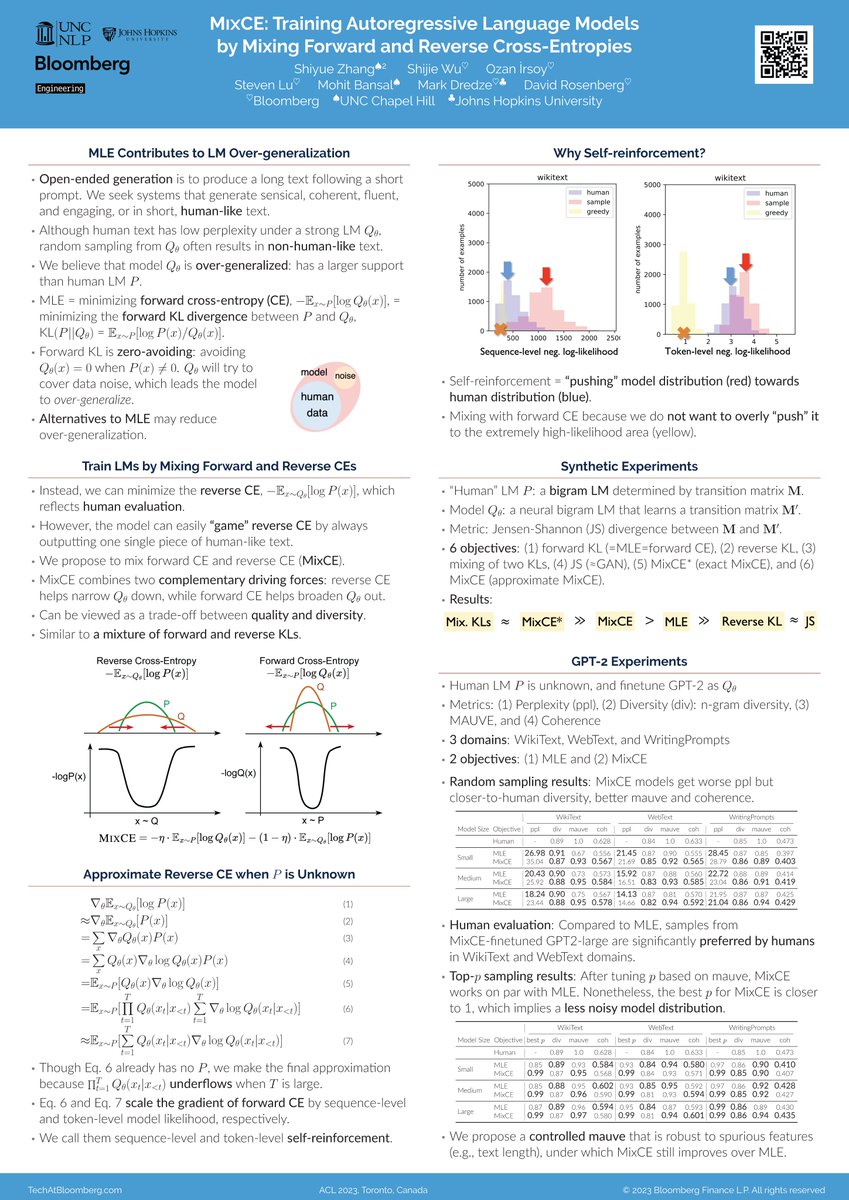

The poster for "MixCE: Training Autoregressive Language Models by Mixing Forward and Reverse Cross-Entropies," joint work by Shiyue Zhang, Shijie Wu, @oirsoy, Steven Lu, Mohit Bansal, Mark Dredze & David Rosenberg is being presented in Poster Session 2 (2 PM EDT) at ACL 2023 #ACL2023NLP #NLProc

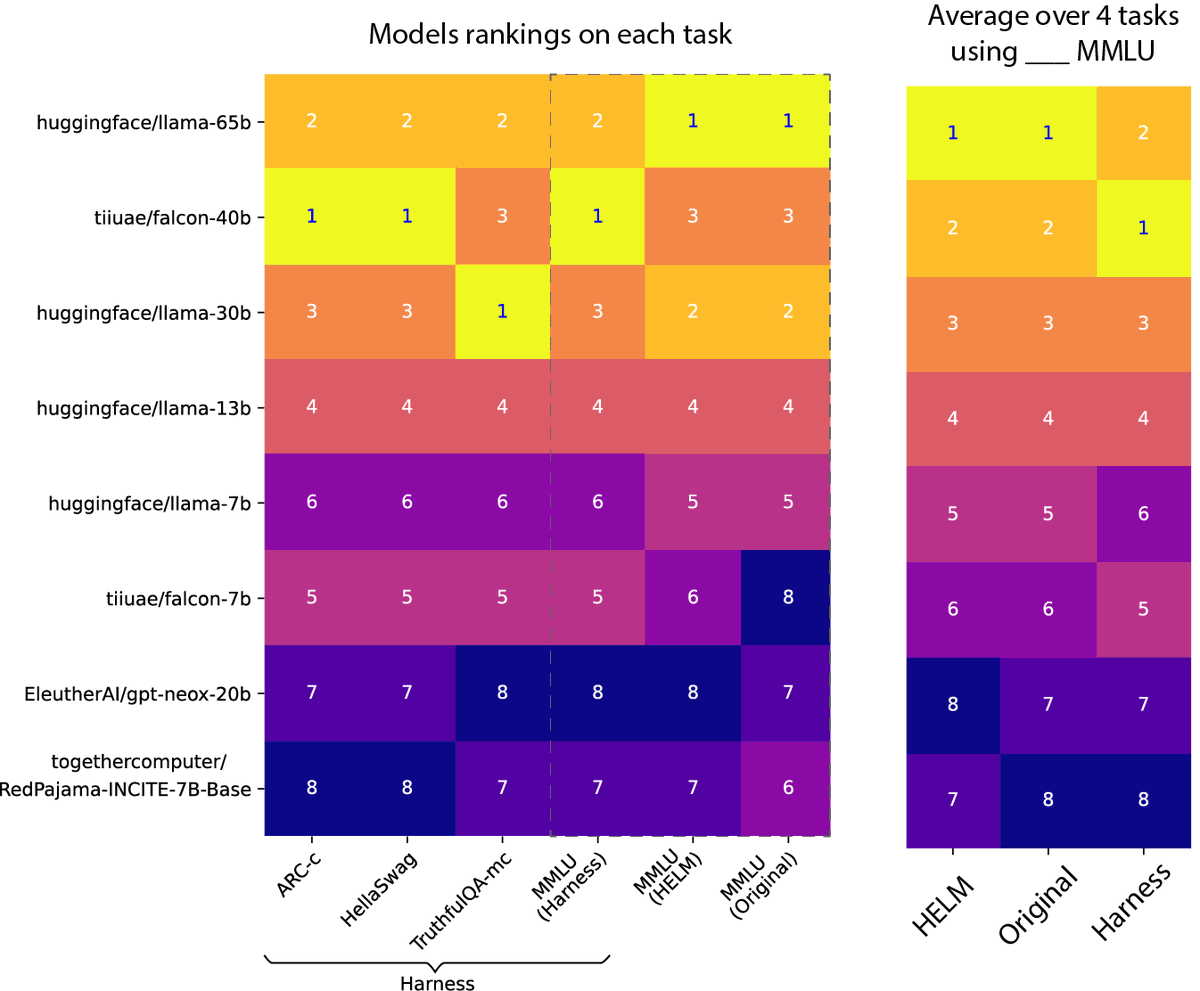

Some excellent work by Jean Kaddour and colleagues arxiv.org/abs/2307.06440 “We find that their training, validation, and downstream gains vanish compared to a baseline with a fully-decayed learning rate” ☠️

![Shiyue Zhang (@byryuer) on Twitter photo](https://pbs.twimg.com/media/FxTlZHoaQAI-Rky.jpg)

Find LMs trained by MLE “over-generalize” and produce non-human-like text? Try out our MixCE objective!

See our #ACL2023nlp paper “MIXCE: Training Autoregressive Language Models by Mixing Forward and Reverse Cross-Entropies”

arxiv.org/abs/2305.16958

github.com/bloomberg/mixc…

[1/9]