exXross

@ex_xross

ex researcher post human era \r&t

reposts and thoughts that are helpful to me

web3.0, dao, rm, meta-narrative

ID: 945374736246296576

25-12-2017 19:24:36

3.3K Tweets

92 Followers

1.1K Following

Skill evolution is the future. Check out Skill Evolve

This is why Andrej Karpathy will go down in the history books as one of the most consequential minds in AI of our time. 243 lines of ruthless compression, but a FULL training + inference loop for an autoregressive transformer. I feel this is also such a genius, quiet defiance of the “AI is

The dark side of reinforcement learning: Olive Song, senior researcher at MiniMax (official), on RL models that try to hack rewards and why alignment fails in practice. This conversation is an inside look at how Chinese AI labs move fast: testing new models overnight, debugging

Another banger from Idan Attias.

Actually sad, actually a good piece of writing. I don’t think it’ll be fiction for too long, and I think openforage could solve this.

In biology, fundamental systems are still coming to light. 3 years ago the Breakthrough Prize recognized the discovery of liquid condensates, a new system of cellular organization. Now it turns out they enable a previously unknown mode of electrical transmission inside cells.

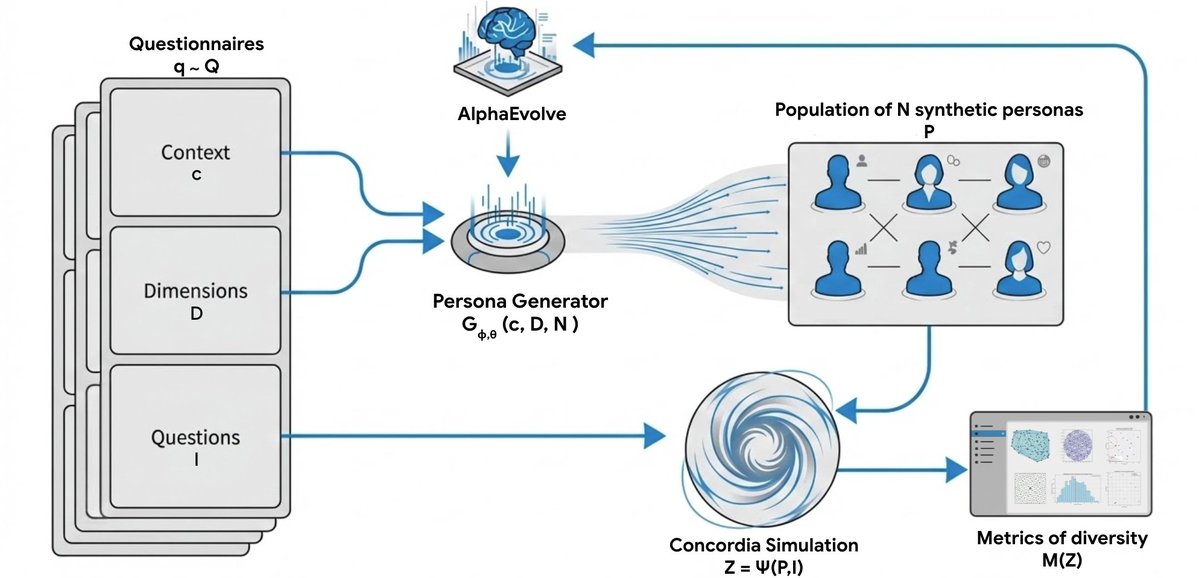

🧬 New paper from my internship at Google DeepMind. We introduce Persona Generators: functions that generate diverse synthetic populations for arbitrary contexts. We use AlphaEvolve to optimize the generator code, hill-climbing on diversity metrics (not just likelihood)

“Brains are not just learners; they are architectures of internal teachers. We should try to find those teachers in the brain, and learn from them.” AI may have more to learn from biological intelligence. Great read from Adam Marblestone, highlighting work from Steven Byrnes.