Blake Mizerany

@bmizerany

Engineer @ollama.

Previously Songbird, early @heroku, early @CoreOS, founder of Backplane (not Lady Gaga’s), @grax, founder @tierrun

ID: 2379441

27-03-2007 00:38:07

4.4K Tweets

3.3K Followers

671 Following

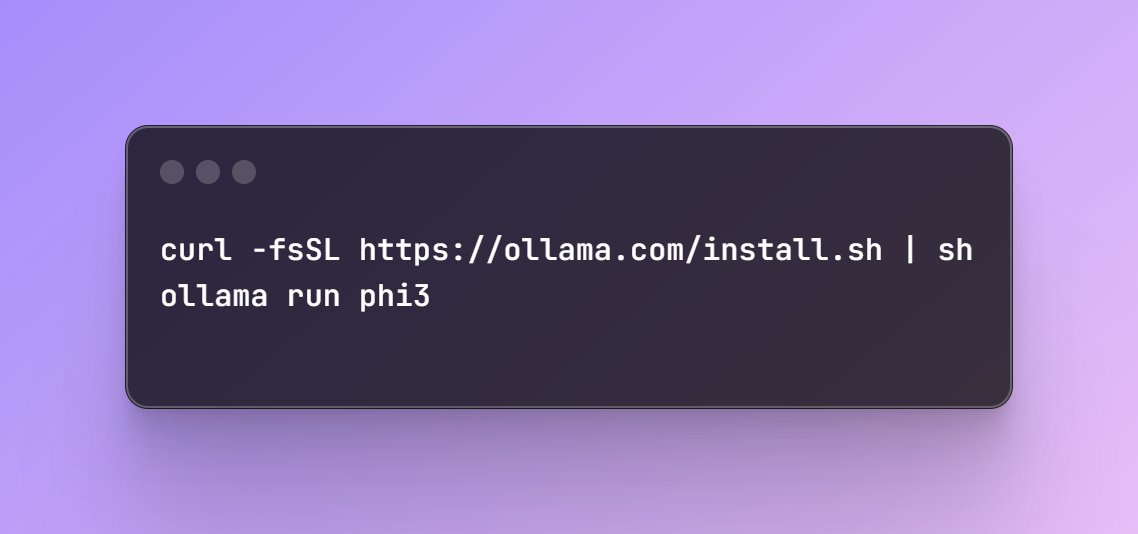

Mistral AI's Mixtral 8x22B Instruct is now available on Ollama! `ollama run mixtral:8x22b` We've updated the tags to reflect the instruct model by default. If you have pulled the base model, please update it by running `ollama pull`.

We need your help testing a new backend performance improvement! 1. Pull a model you don't have yet (or remove it first). Examples: ollama pull issue1736.ollama.dev/library/llama3… ollama pull issue1736.ollama.dev/library/gemma:… ollama pull issue1736.ollama.dev/library/mistral ollama pull issue1736.ollama.dev/library/llava-… ollama

Pretty sure those are TOTO USA Inc. water cannons. x.com/MKBHD/status/1…

`ollama run qwq` If you have previously downloaded the QwQ preview model, please update directly via `ollama pull qwq`. Thank you, Junyang Lin and Binyuan Hui. Let's go!