Adriano Fragomeni

@adrifragomeni

PhD Graduate Student in Computer Vision @BristolUni @VILaboratory

ID: 831843889019547648

https://adrianofragomeni.github.io/ 15-02-2017 12:33:32

9 Tweets

24 Followers

62 Following

Read about Bristol's part in the #Ego4D project through our press release @BristolUniEng bristol.ac.uk/news/2021/octo… Check Interactive Webpage: ego4d-data.org Big shoutout to team: Michael Wray, Will Price, Jonathan Munro and Adriano Fragomeni

📢[Pls RT] Used EPIC-KITCHENS in ur work? Contribute to our impact anonymous survey: surveymonkey.co.uk/r/epic_kitchens We are collecting data on usage from academia/industry as well as type of data used: narrations/action segments. Will u use our upcoming pixel-level segmentations?? LUK!

Congratulations to all winners, on behalf of myself and this year's challenges team: Giovanni M Farinella, Antonino Furnari, Davide Moltisanti, Daniel Whettam, Adriano Fragomeni, Toby Perrett, Bin Zhu, Michael Wray

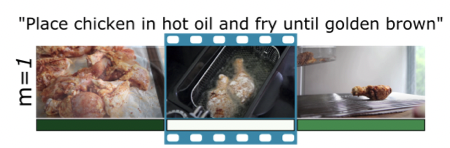

[Today on ArXiv]: our ACCV 2022 Oral *ConTra* Learning-to-attend to local temporal context for disambiguating cross-modal retrieval. E.g. retrieving “divide the dough in 2” benefits from attending to clips w/ dough stretched. ArXiv: arxiv.org/abs/2210.04341 adrianofragomeni.github.io/ConTra-Context…

ConTra focuses on untrimmed videos with large # of clips/video - where video-paragraph approaches are unhelpful. SOTA clip retrieval. Work by Adriano Fragomeni w Michael Wray @BristolUniEng. E.g. 2: retrieving "frying chicken" benefits from attending to prior clip where chicken is visible

🎄🥘 Xmas meal 2022 for #MaVi group Bristol School of Computer Science @BristolUniEng was loads of fun. 🎅 took note of our wishes for 2023. Keeping fingers crossed🤞for papers🗃️under review or ongoing works. I'd like to take the opportunity to thank everyone for their hard work. Highlights of 2022 in🧵