Bhargavi Paranjape

@bvp22294

Research @ Meta

ID: 2268381841

https://bhargaviparanjape.github.io/

30-12-2013 05:32:11

73 Tweets

604 Followers

498 Following

Check out ✨Husky✨, Joongwon Kim's new work on open-source LM agents for multi-step reasoning + tool-use! 📄 Paper: arxiv.org/abs/2406.06469 📷 Code: github.com/agent-husky/Hu…

So happy our new multilingual benchmark MultiLoKo is finally out (after some sweat and tears!): arxiv.org/abs/2504.10356 Multilingual eval for LLMs... could be better, and I hope MultiLoKo will help fill some gaps in it and help study design choices in benchmark construction. AI at Meta

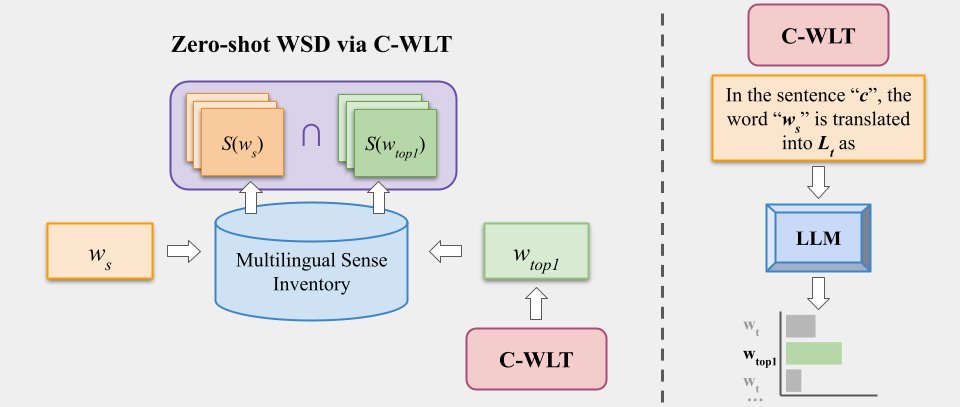

Weijia Shi (@weijiashi2):

Ever wondered which data black-box LLMs like GPT are pretrained on? 🤔

We build a benchmark WikiMIA and develop Min-K% Prob 🕵️, a method for detecting undisclosed pretraining data from LLMs (relying solely on output probs).

Check out our project: swj0419.github.io/detect-pretrai…

[1/n]

![Weijia Shi (@weijiashi2) on Twitter photo](https://pbs.twimg.com/media/F9YvXJnacAAKvwl.jpg)
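The Min-K% Prob idea mentioned above can be sketched in a few lines. As we understand it, the score for a candidate text is the average log-probability of its k% least likely tokens. Text the model saw during pretraining tends to contain fewer very surprising tokens, so a higher score suggests membership. This is a minimal sketch, not the authors' implementation. It assumes per-token log-probabilities `log p(x_i | x_<i)` have already been obtained from the model under test. The function names, the default `k`, the "keep at least one token" rule, and the threshold are all hypothetical choices made for illustration.

```python
from typing import Sequence


def min_k_percent_prob(token_logprobs: Sequence[float], k: float = 0.2) -> float:
    """Average log-probability over the k fraction of lowest-probability tokens.

    token_logprobs: log p(x_i | x_<i) for each token of the candidate text,
    assumed to come from the model being audited (hypothetical input).
    """
    if not token_logprobs:
        raise ValueError("need at least one token")
    if not 0 < k <= 1:
        raise ValueError("k must be in (0, 1]")
    # Keep at least one token so very short texts still get a score
    # (an assumption of this sketch).
    n = max(1, int(len(token_logprobs) * k))
    # The n most "surprising" tokens are the ones with the lowest log-probs.
    lowest = sorted(token_logprobs)[:n]
    return sum(lowest) / n


def likely_seen_in_pretraining(
    token_logprobs: Sequence[float], threshold: float, k: float = 0.2
) -> bool:
    # Higher (less negative) score means fewer outlier tokens, which this
    # heuristic treats as evidence the text was in the training data.
    return min_k_percent_prob(token_logprobs, k) > threshold


if __name__ == "__main__":
    # Toy log-probabilities, made up purely for illustration.
    familiar = [-0.1, -0.3, -0.2, -0.5, -0.4]
    novel = [-0.1, -4.0, -0.2, -6.0, -0.3]
    for name, lp in [("familiar", familiar), ("novel", novel)]:
        score = min_k_percent_prob(lp, k=0.4)
        print(name, round(score, 2), likely_seen_in_pretraining(lp, -1.0, k=0.4))
```

In practice the threshold would have to be calibrated on texts with known membership, such as a benchmark like WikiMIA, rather than fixed by hand as in this toy example.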