Kazz

@kazzorr_

math, physics, AI/ML

ID: 1970150615881048064

22-09-2025 15:38:39

1.1K Tweets

49 Followers

103 Following

You can now train Qwen3.5 with RL in our free notebook! You just need 8GB VRAM to RL Qwen3.5-2B locally! Qwen3.5 will learn to solve math problems autonomously via vision GRPO. RL Guide: unsloth.ai/docs/get-start… GitHub: github.com/unslothai/unsl… Qwen3-4B: colab.research.google.com/github/unsloth…

Andrej Karpathy

The LiteLLM dependency incident didn't "just happen," though. It is part of a larger campaign, and the fallout from LiteLLM already extends to the supply-chain security of other projects: snyk.io/articles/poiso…

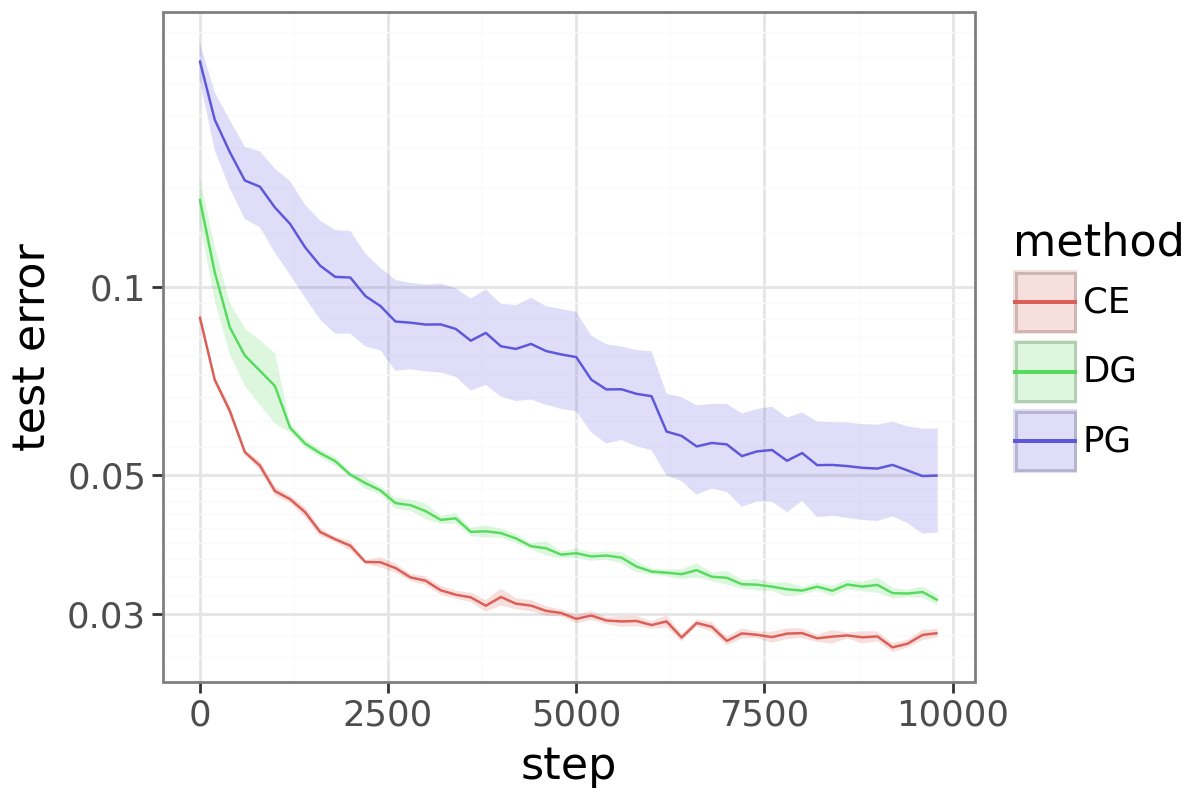

nanoGPT by Andrej Karpathy is still the most relevant reference to hack on and learn from if you're starting out in AI research. I tried to look (it's been a long time!) at all the work that has been done to beat the baseline: > Architectural modernization (RoPE, QK-norm, ReLU, RMSNorm, etc.) >