Everlier

@everlier

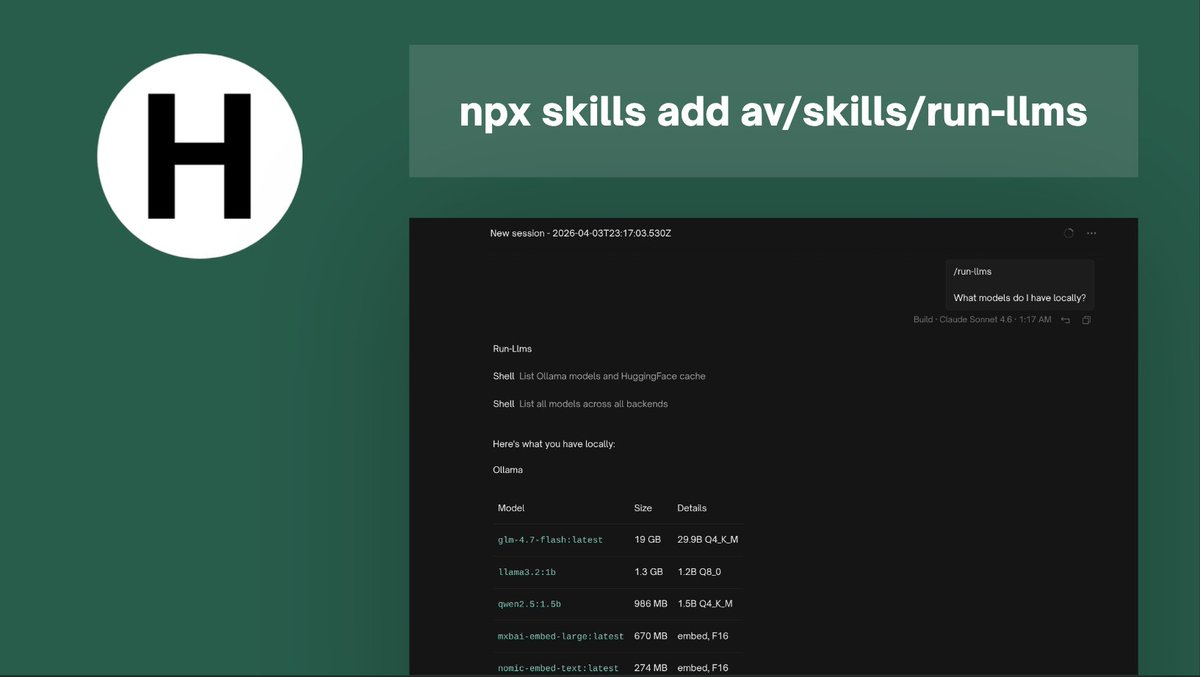

Building LLM agents & tools

openguard.sh

github.com/av/harbor

remotion-bits.dev

@jitera_official

ID: 133797694

http://av.codes 16-04-2010 17:10:30

6.6K Tweets

828 Followers

450 Following

Google DeepMind Such a great release. Each of the models is interesting in its own way.
31B - packing what was recently frontier capability (~Sonnet 4 level) into such a small size, great response to the Qwen 3.5 27B
26B - great for actual use on most consumer devices, to power up all the

Harveen Singh Chadha Nobody noticed because those MAI models are for transcription and image generation and only available via API, so ofc Gemma 4 took the scene with Apache 2.0 :)

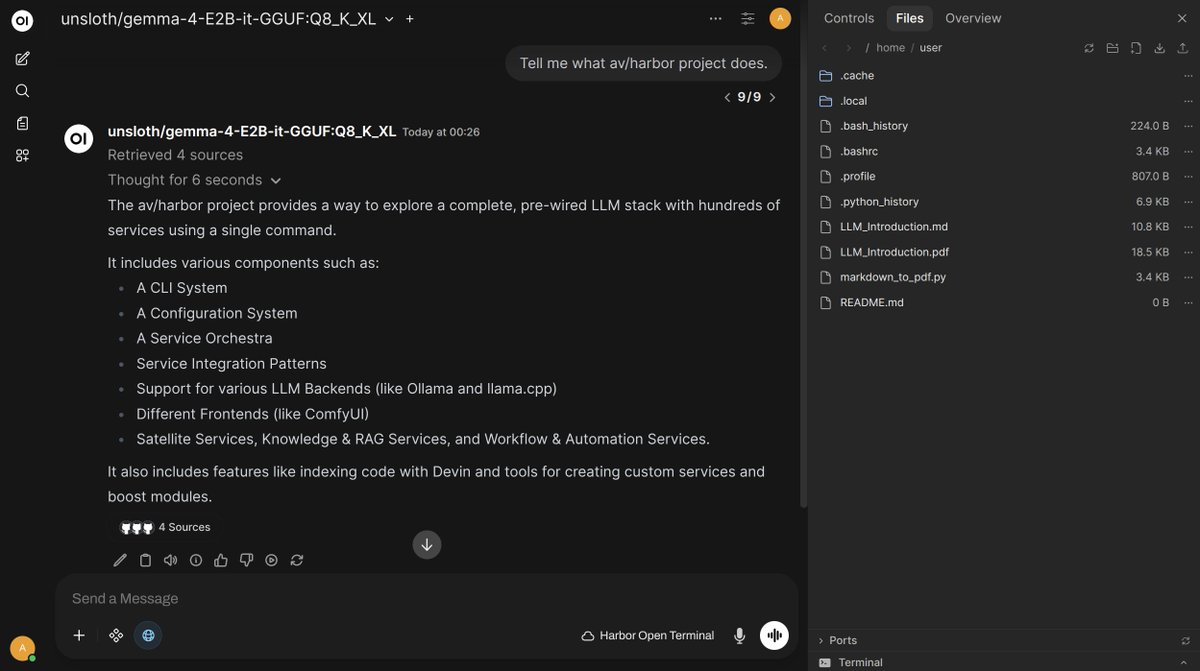

David Ondrej How about this:

```
harbor pull gemma4
harbor up searxng openterminal
harbor open
```

Now you have Open WebUI + Web RAG + persistent sandbox for the LLM to use to work with files, all containerized without risking your host if a clanker goes wild :)