David Pissarra

@davidpissarra

PhD Student @NYU_Courant | MSc @istecnico @Tsinghua_Uni | prev: Research Intern @CSDatCMU

ID: 1213860292330848261

http://davidpissarra.com 05-01-2020 16:30:52

14 Tweets

114 Followers

133 Following

Exciting news: StreamingLLM is now available on iPhone! 🎉 A huge thanks to David Pissarra for his fantastic extension to our work. Can't wait to explore the possibilities with StreamingLLM!

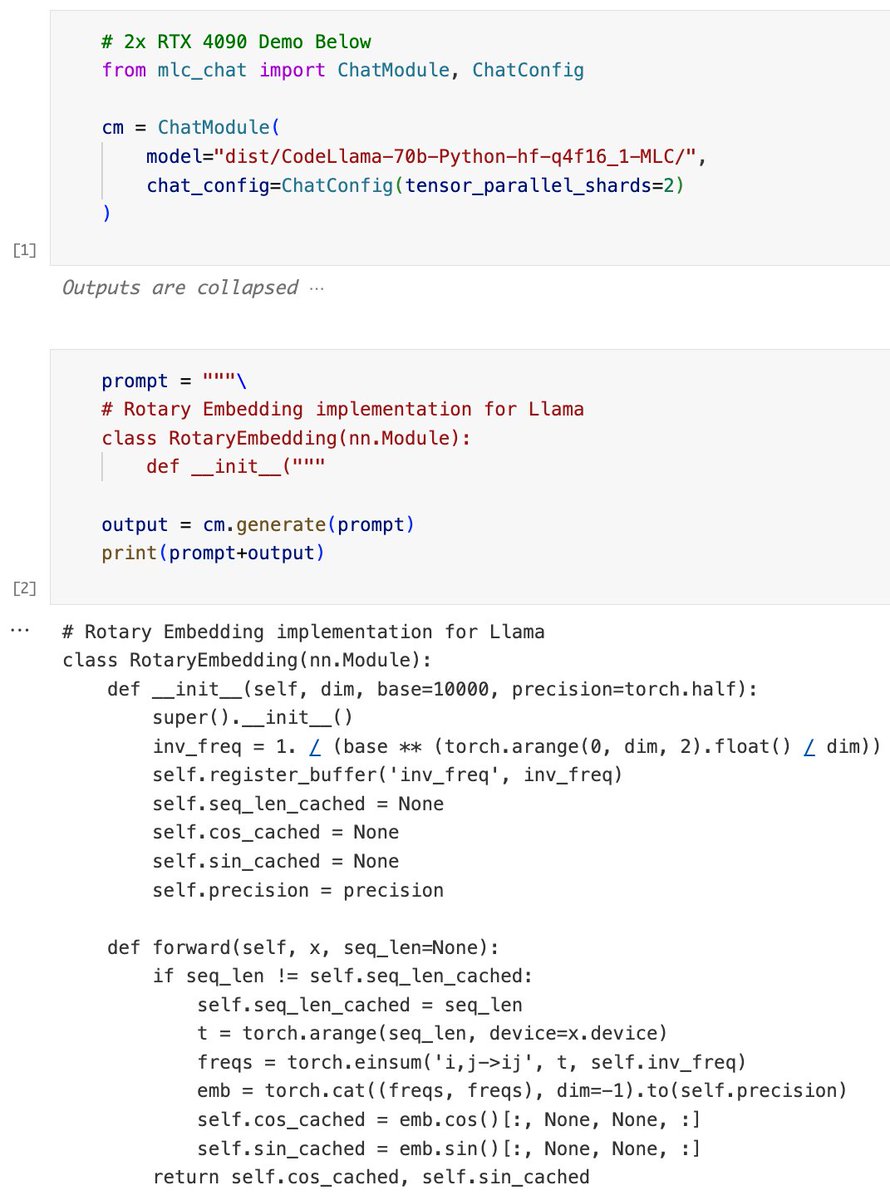

Run Gemma model locally on iPhone - we get blazing fast 20 tok/s for 2B model. This shows amazing potential ahead for Gemma fine-tunes on phones, made possible by the new MLC SLM compilation flow by Junru Shao from octoaicloud and many other contributors. github.com/mlc-ai/mlc-llm

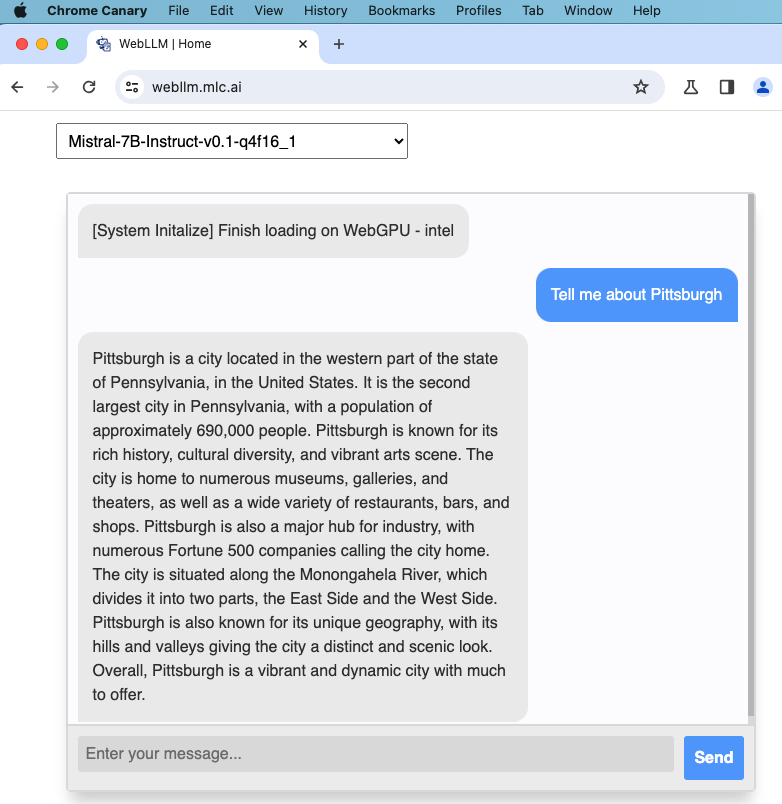

webllm.mlc.ai now adds Gemma from Google DeepMind! The 2b model is perfect for building in-browser agents with WebGPU acceleration -- everything local! Here is a 1x speed demo of 4-bit quantized gemma-2b-it on Google Pixel 7 Pro with Chrome.