chuyi shang

@chuyishang

CS + Econ @UCBerkeley | research @BerkeleyML @Berkeley_AI | big food, music, and wikipedia enthusiast

ID: 3818624775

29-09-2015 22:36:25

75 Tweets

104 Followers

579 Following

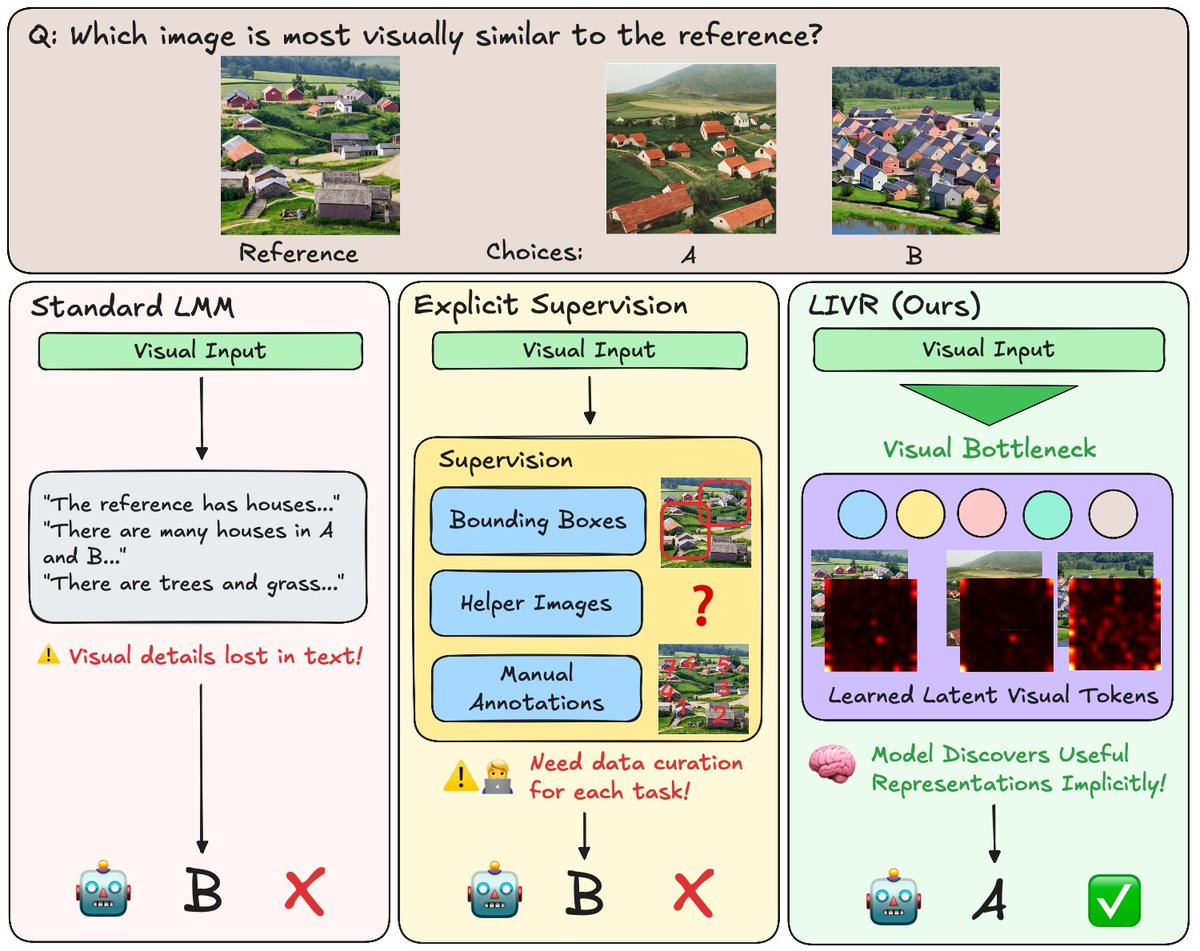

Happy to share that our 𝐌𝐮𝐥𝐭𝐢𝐦𝐨𝐝𝐚𝐥 𝐀𝐠𝐞𝐧𝐭𝐢𝐜 work🤖 has been accepted to #EMNLP2024 Main conference! Hope to see everyone in Miami!🥳🌞🌅 Kudos to all authors and collaborators: chuyi shang, Amos You, Sanjay Subramanian, and trevordarrell.

i am once again asking Weights & Biases to make a phone app so that i can monitor the situation when i'm outside