Ali Fartoot

@ali_fartout

Machine Learning Engineer

ID: 1473192360016236548

21-12-2021 07:23:35

101 Tweets

43 Followers

451 Following

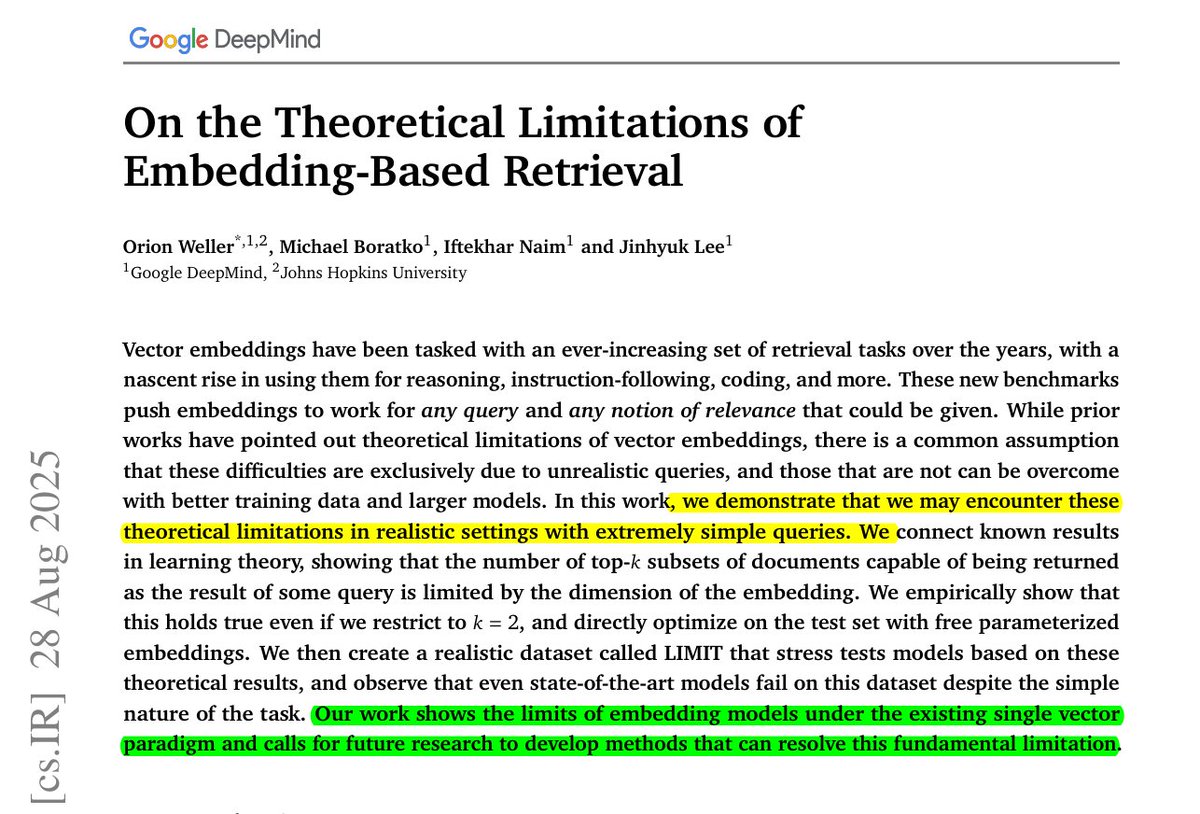

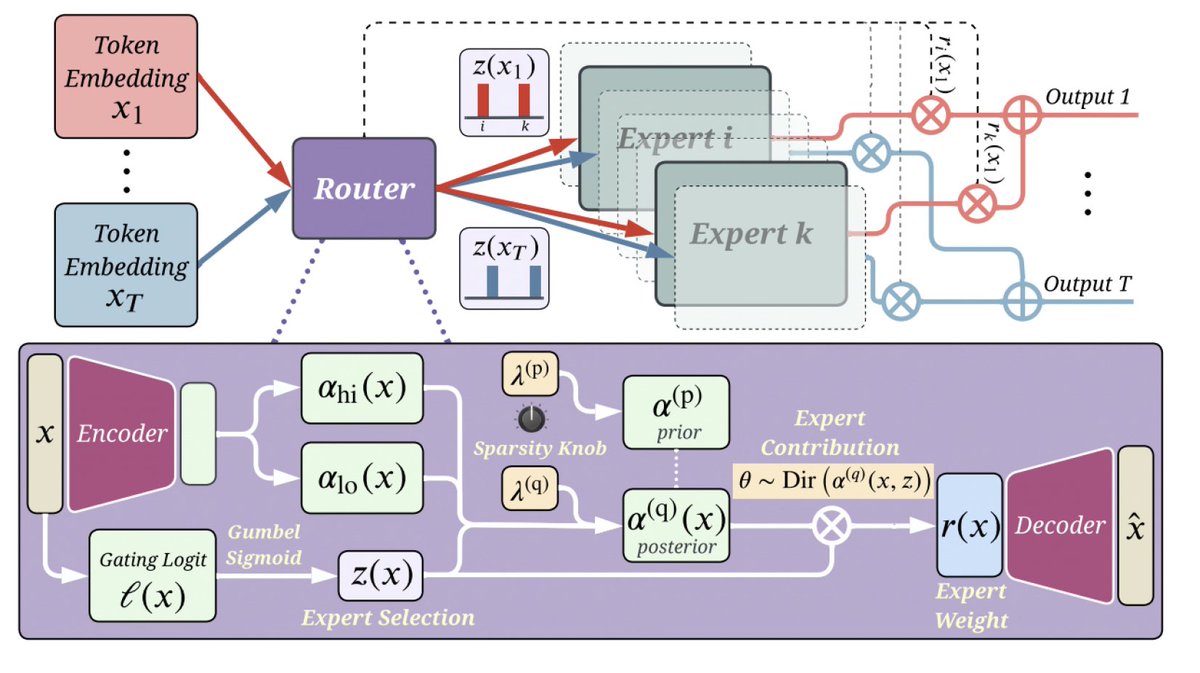

BRILLIANT Google DeepMind research. Even the best embeddings cannot represent all possible query-document combinations, which means some answers are mathematically impossible to recover. It reveals a sharp truth: embedding models can only capture so many pairings, and beyond that,
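
A toy illustration of the claim, not the experiment from the research mentioned above: assuming dot-product scoring and hypothetical 1-dimensional embeddings for three documents, the sketch below sweeps many queries and records which top-2 result sets ever come back. The document values, the query grid, and the `top_k` helper are all made up for illustration.

```python
from itertools import combinations

def top_k(query, docs, k):
    """Return the indices of the k highest dot-product scores, as a sorted tuple."""
    scores = [sum(q * x for q, x in zip(query, doc)) for doc in docs]
    ranked = sorted(range(len(docs)), key=lambda i: scores[i], reverse=True)
    return tuple(sorted(ranked[:k]))

# Hypothetical 1-dimensional document embeddings (illustrative values only).
docs = [(-1.0,), (0.5,), (2.0,)]

# Sweep 1-dimensional queries from -5.0 to 5.0, skipping 0 to avoid all-zero ties.
queries = [(q / 10,) for q in range(-50, 51) if q != 0]

reachable = {top_k(q, docs, 2) for q in queries}
all_pairs = set(combinations(range(len(docs)), 2))
unreachable = all_pairs - reachable

print("reachable top-2 sets:  ", sorted(reachable))
print("unreachable top-2 sets:", sorted(unreachable))
```

In one dimension a query can only rank the documents in ascending or descending order, so the pair made of the two extreme documents is never returned together, whatever the query. Higher dimensions allow more pairings, but the number of documents can grow faster than the pairings a fixed dimension can represent.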