Zhenrui Yue

@yueeeeeeee2837

PhD @UofIllinois | Incoming @AIatMeta | Prev @GoogleDeepMind @NVIDIAAI @ETH @UCSD | Interested in LLM, IR & RecSys

ID: 1512988047322849280

http://yueeeeeeee.github.io 10-04-2022 02:57:27

163 Tweets

1.1K Followers

1.1K Following

Our first release is Gemini 3 Pro, which is rolling out globally starting today. It significantly outperforms 2.5 Pro across the board: 🥇 Tops LMArena and WebDev lmarena.ai leaderboards 🧠 PhD-level reasoning on Humanity’s Last Exam 📋 Leads long-horizon planning on Vending-Bench 2

We’re hiring at xAI to build AI agents that can see, think, understand, and generate video, from short clips to long coherent movies. If you live for data, agents, and RL, and have a passion for short/long video generation, come ship the future with us! job-boards.greenhouse.io/xai/jobs/49751…

Sam Altman can we start getting free boba if we order through chatgpt

You're telling me that this model, which literally beats Gemini-3 Pro on Artificial Analysis, has just dropped on Hugging Face huggingface.co/zai-org/GLM-5

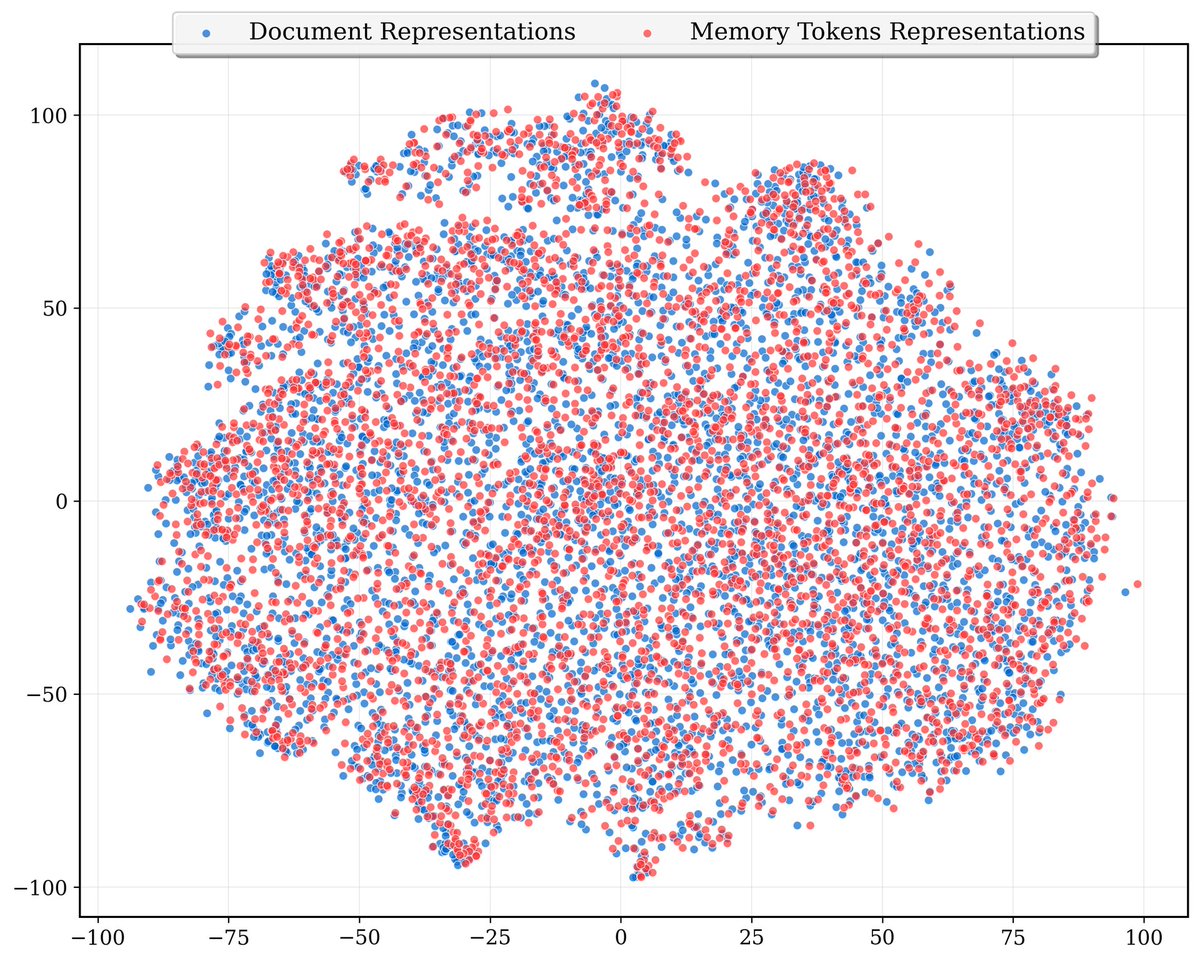

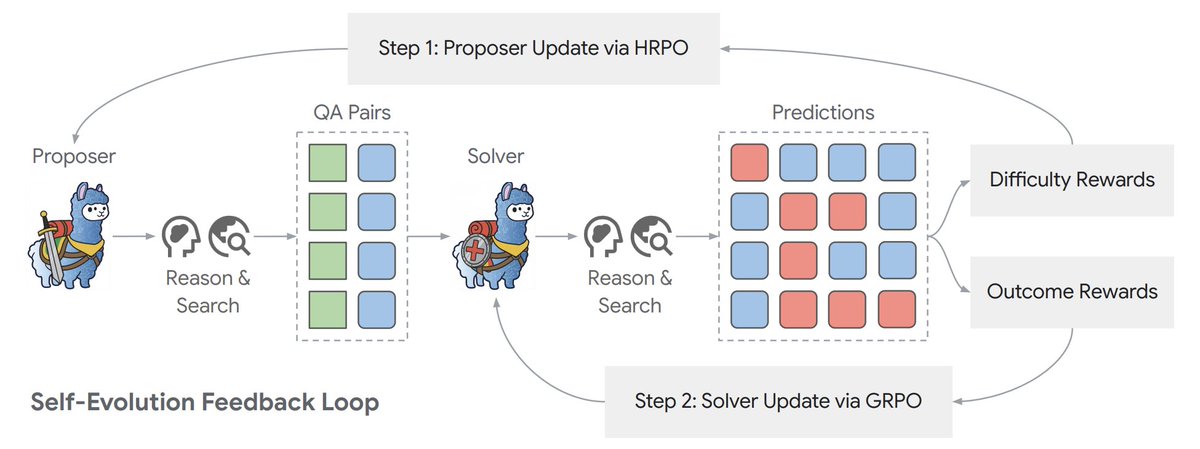

[LG] Dr. Zero: Self-Evolving Search Agents without Training Data

Z Yue, K Upasani, X Yang, S Ge... [Meta Superintelligence Labs] (2026)

arxiv.org/abs/2601.07055

![fly51fly (@fly51fly) on Twitter photo](https://pbs.twimg.com/media/G-kvAd2bQAAGv2H.jpg)