Victoria X Lin

@victorialinml

Research Scientist @AIatMeta | MoMa 🖼 • RA-DIT 🔍 • OPT-IML

Ex: @SFResearch • PhD @uwcse

📜 threads.net/@v.linspiration 🌴 Bay Area

ID: 225054090

https://victorialin.org 10-12-2010 15:16:16

1.1K Tweets

3.3K Followers

884 Following

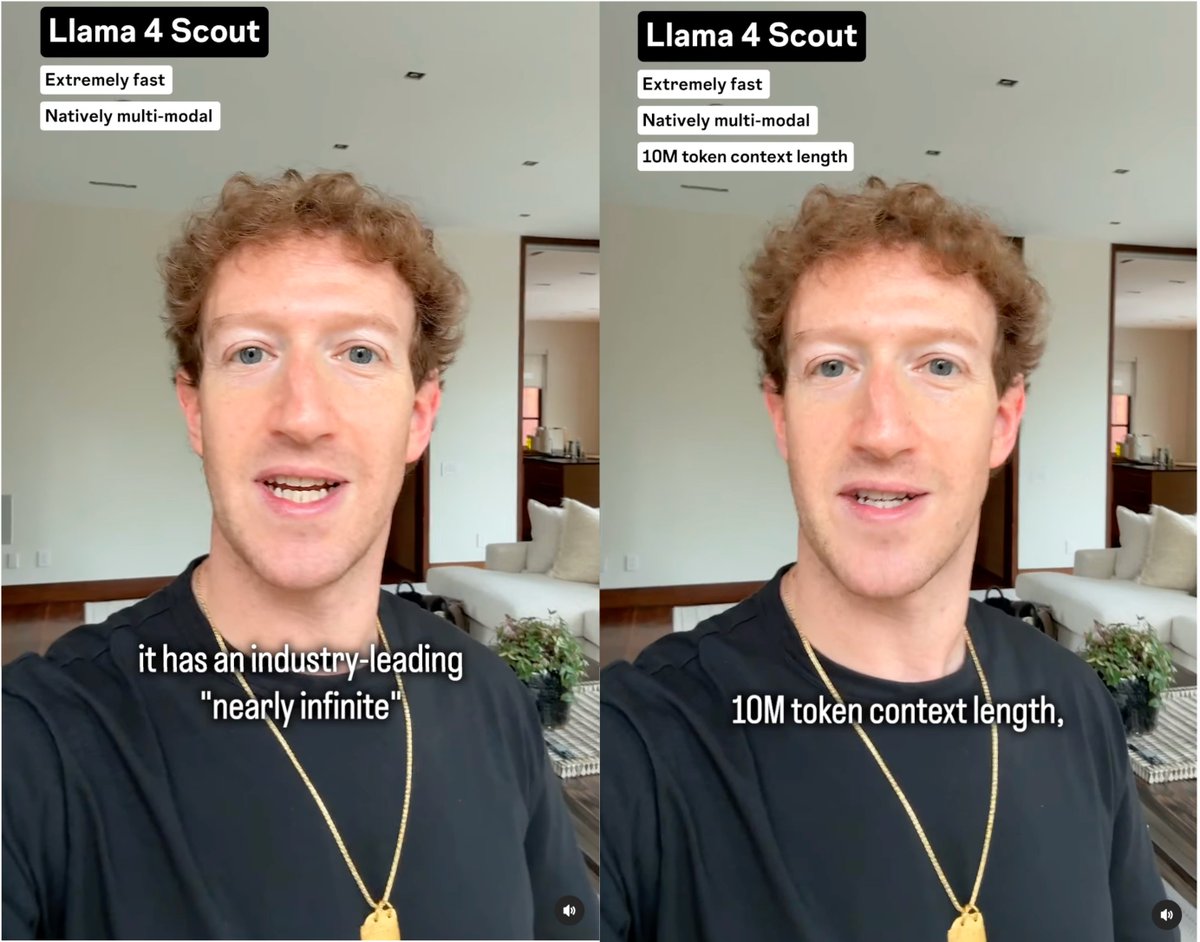

Victoria X Lin: Splitting transformer parameters by ⭐ Understanding (X→text) vs. 📷 Generation (X→image) functionality. We already did that in LMFusion.