Tae-Hyun Oh

@tae_hyun_oh

Associate professor @ KAIST

Former postdoc researcher @ Facebook AI Research

Former postdoc associate @ MIT CSAIL

ID: 1294636328487796741

15-08-2020 14:05:38

39 Tweets

74 Followers

117 Following

This work is a nice collaboration with GeonU Kim and Tae-Hyun Oh. GeonU, the first author, was only a first-semester M.S. student when he started this project! His great effort and productivity led to this amazing result 👏 Page: kim-geonu.github.io/FPRF/

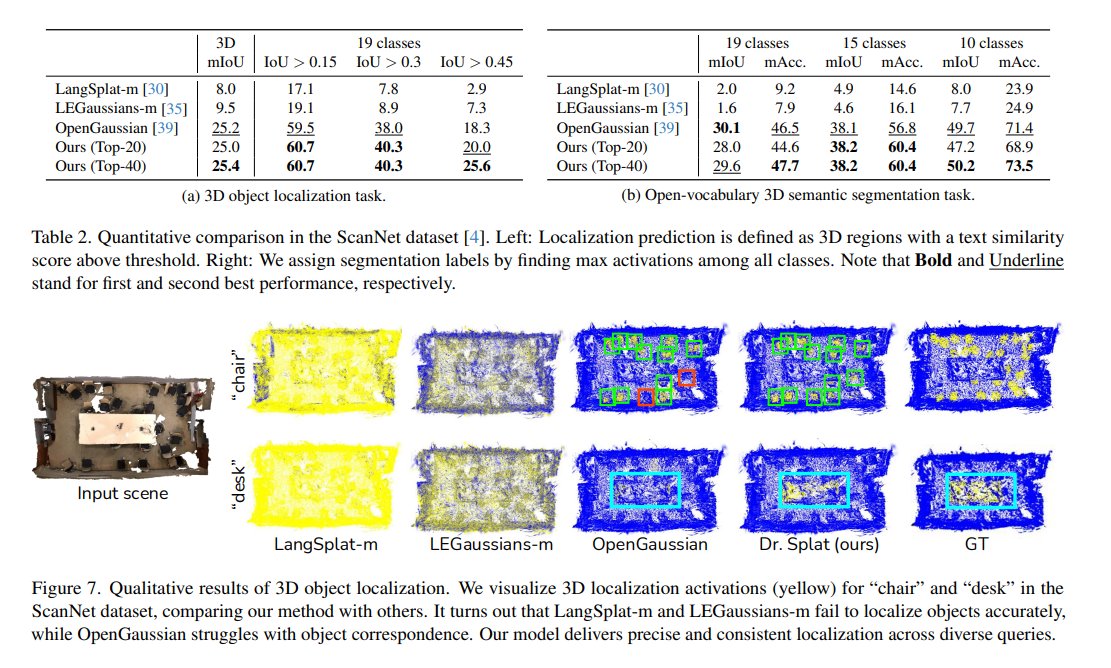

Dr. Splat: Directly Referring 3D Gaussian Splatting via Direct Language Embedding Registration. Kim Jun-Seong, GeonU Kim, Yu-Ji Kim, Yu-Chiang Frank Wang, Jaesung Choe, Tae-Hyun Oh. tl;dr: distill language knowledge into 3DGS. arxiv.org/abs/2502.16652

The work was led by the amazing Sungbin Kim (sites.google.com/view/kimsungbin), in collaboration with Jeongsoo Choi, Joon Son Chung, Tae-Hyun Oh, and David Harwath. Check out voicecraft-dub.github.io for more samples, and stay tuned for the forthcoming code and model!

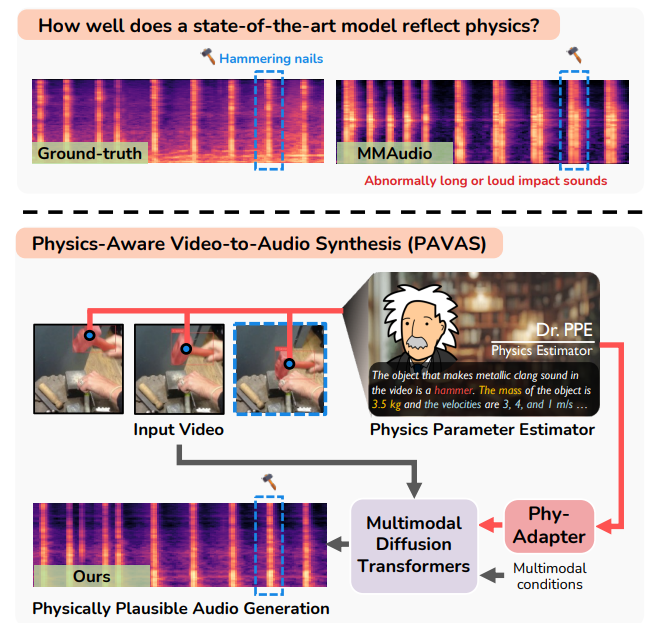

🎉 PAVAS, a framework that generates physically plausible audio from video by integrating physics estimation, is at #CVPR2026! Led by our intern Hyun-Bin Oh (x.gd/pE0IB), in collaboration with 過密都市, Tae-Hyun Oh, and Yuki Mitsufuji. 🎧&📝: x.gd/ObKwe