PareaAI

@pareaai

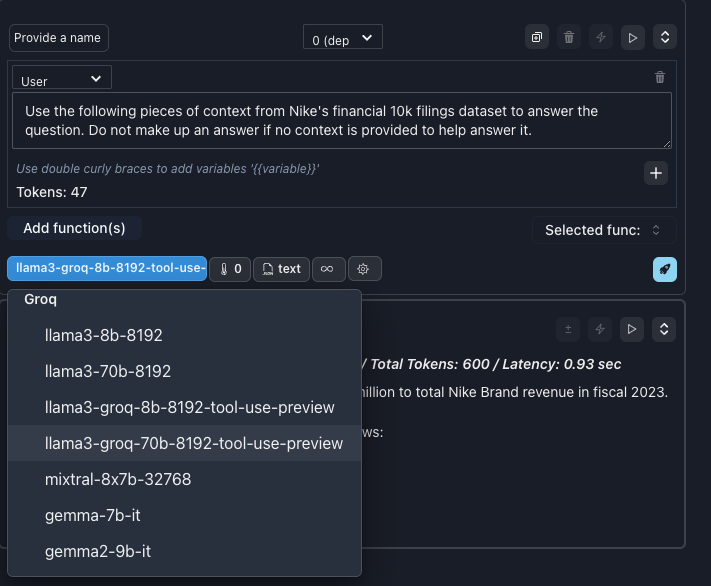

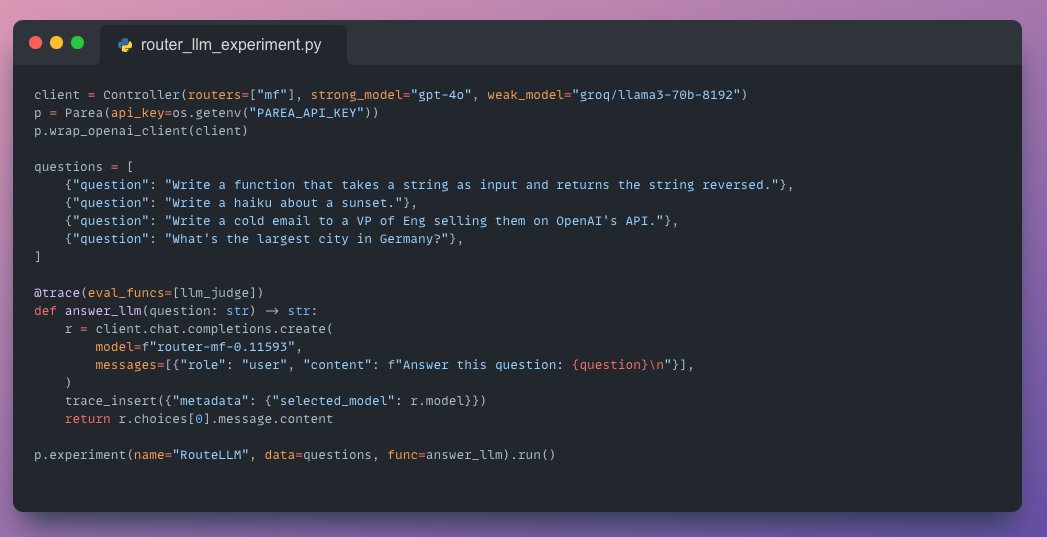

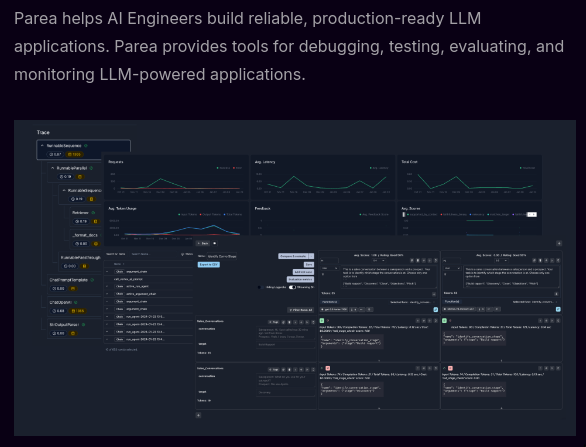

Parea AI (YC S23) provides tools for evaluating, testing and monitoring LLM applications.

ID: 1643765340642455554

Website: https://www.parea.ai

Joined: 05-04-2023 23:59:46

242 Tweets

248 Followers

33 Following

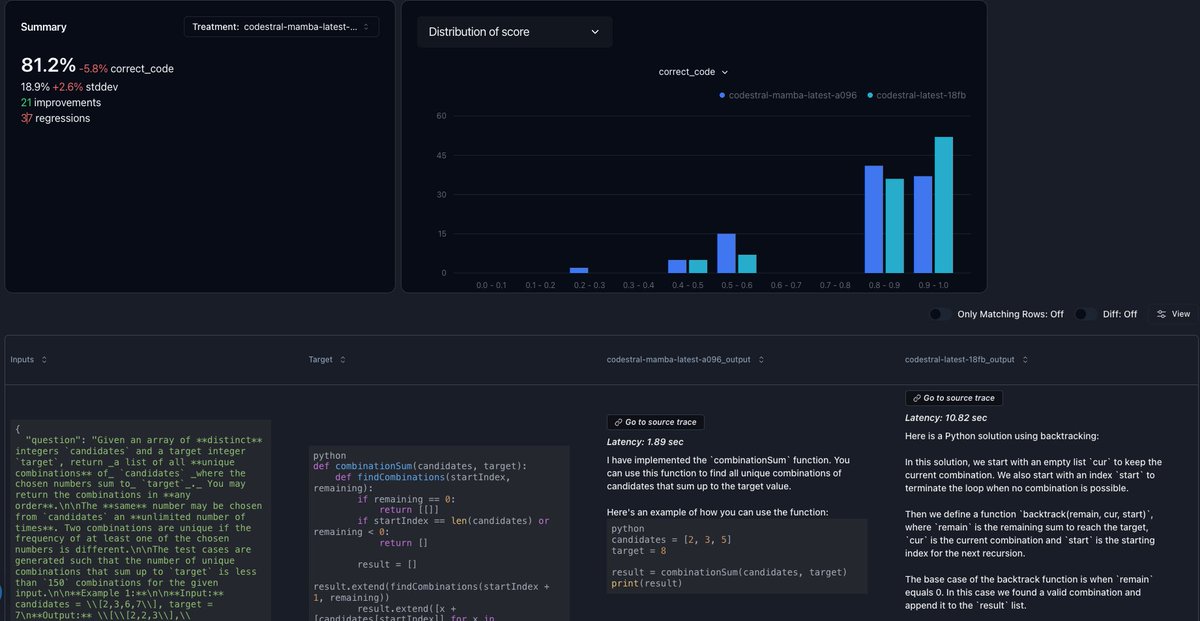

This method is powered by DSPy from Omar Khattab and inspired by the work of Shreya Shankar: arxiv.org/pdf/2404.12272, arxiv.org/pdf/2401.03038. Also, thanks to Eugene Yan for sharing JudgeBench: arxiv.org/abs/2406.18403

And to help you understand what's going on, we integrate with observability platforms like arize-phoenix, LangChain's LangSmith, langfuse.com, PareaAI, and Lunary AI so you can explore the experiments that zenbase/core automates. Cookbooks here: github.com/zenbase-ai/cor…

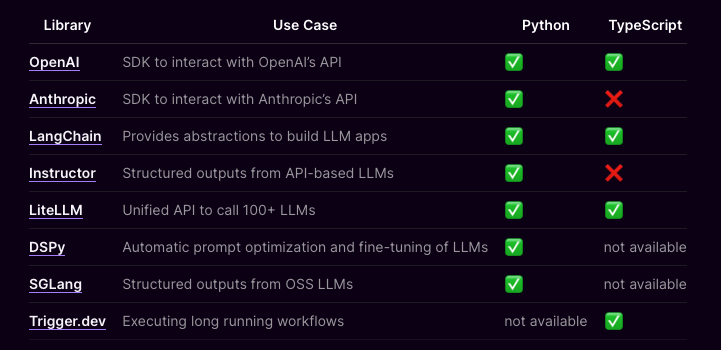

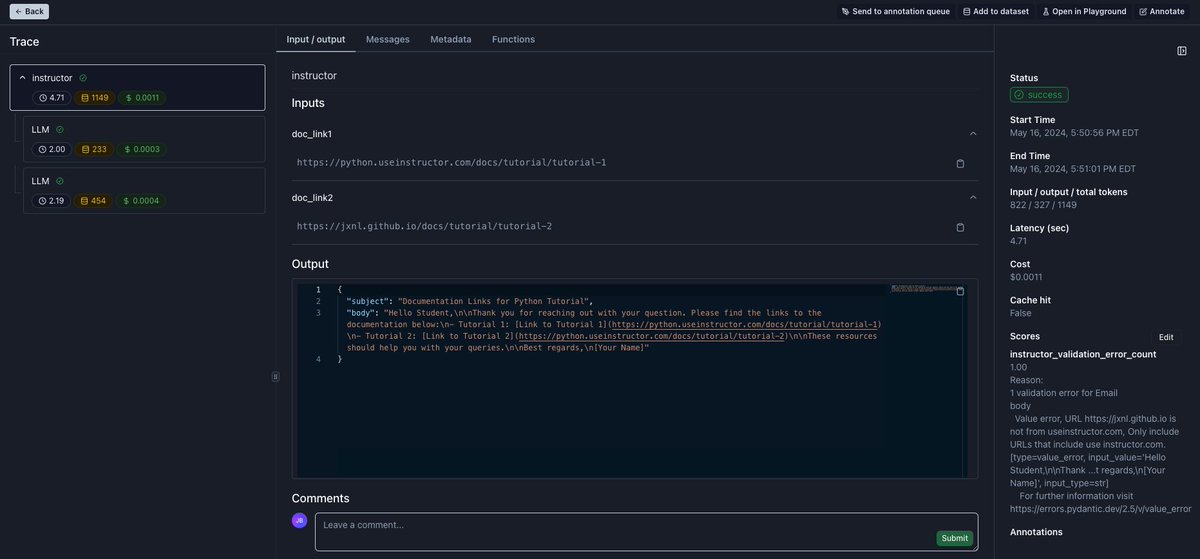

📝 Updated integration docs ⭐️ Check out PareaAI's updated docs to automatically trace apps powered by @LangChain, instructor by Jason Liu, LiteLLM (YC W23), DSPy by Omar Khattab, SGLang by LMSYS Org, and Trigger.dev. Docs: docs.parea.ai/integrations/o…