OpenGenus.org | Join our Internship 👨‍💼👩‍💼

@opengenus

#itac23 The future of the Internet on Earth is bright but dull on Mars.

Binary Tree Book amzn.to/3deEWT4

Powered by @DigitalOcean and @Discourse 😍 #100DaysOfCode

ID: 750717349439770624

http://internship.opengenus.org/ 06-07-2016 15:45:38

18.18K Tweets

1.1K Followers

68 Following

#1 Best Seller in "Computer Science" at Amazon India: Array Problems for the day before your Coding Interview (3 August 2024) 📕 amzn.to/3LVxHQw #Algorithms #100DaysOfCode #array #interview

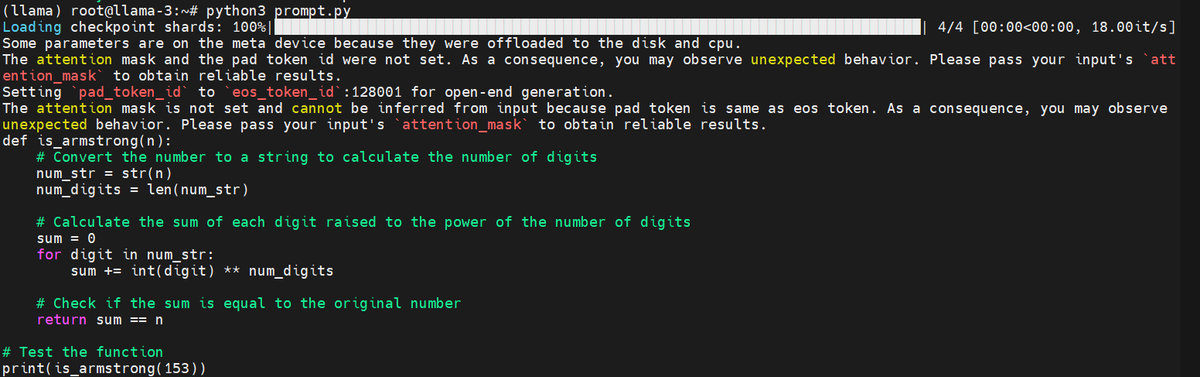

You can run Llama3.1-8B (LLM) on a DigitalOcean CPU droplet at low cost, with no need for a GPU. This is an efficient way to get started with advanced LLM inference using minimal resources. iq.opengenus.org/llama-on-digit… #DoforOpenSource

AMD GPU droplets on DigitalOcean are a gem

Running Llama 3.1 8B on a DigitalOcean CPU droplet (yes, a CPU, not a GPU; it is possible). Try it yourself: iq.opengenus.org/llama-on-digit… #DoforOpenSource