Niklas Muennighoff

@muennighoff

Researching AI/LLMs @Stanford @ContextualAI @allen_ai

ID: 1261440894131044352

https://muennighoff.github.io/ 15-05-2020 23:38:57

206 Tweets

8.8K Followers

425 Following

Grateful for chatting with Sam Charrington about LLM reasoning, test-time scaling & s1!

Very excited to join the Knight-Hennessy Scholars at Stanford🌲 Loved discussing the big goals other scholars are after, from driving Moore’s Law in biotech to preserving culture via 3D imaging. Personally, most excited about AI that can one day help us cure all diseases :)

Nice work by Ryan Marten, Etash Guha & co! Made me wonder: if you aim to train the best 7B model when much better (but much larger) models are available, when does it make sense to do RL over distill+SFT?🤔

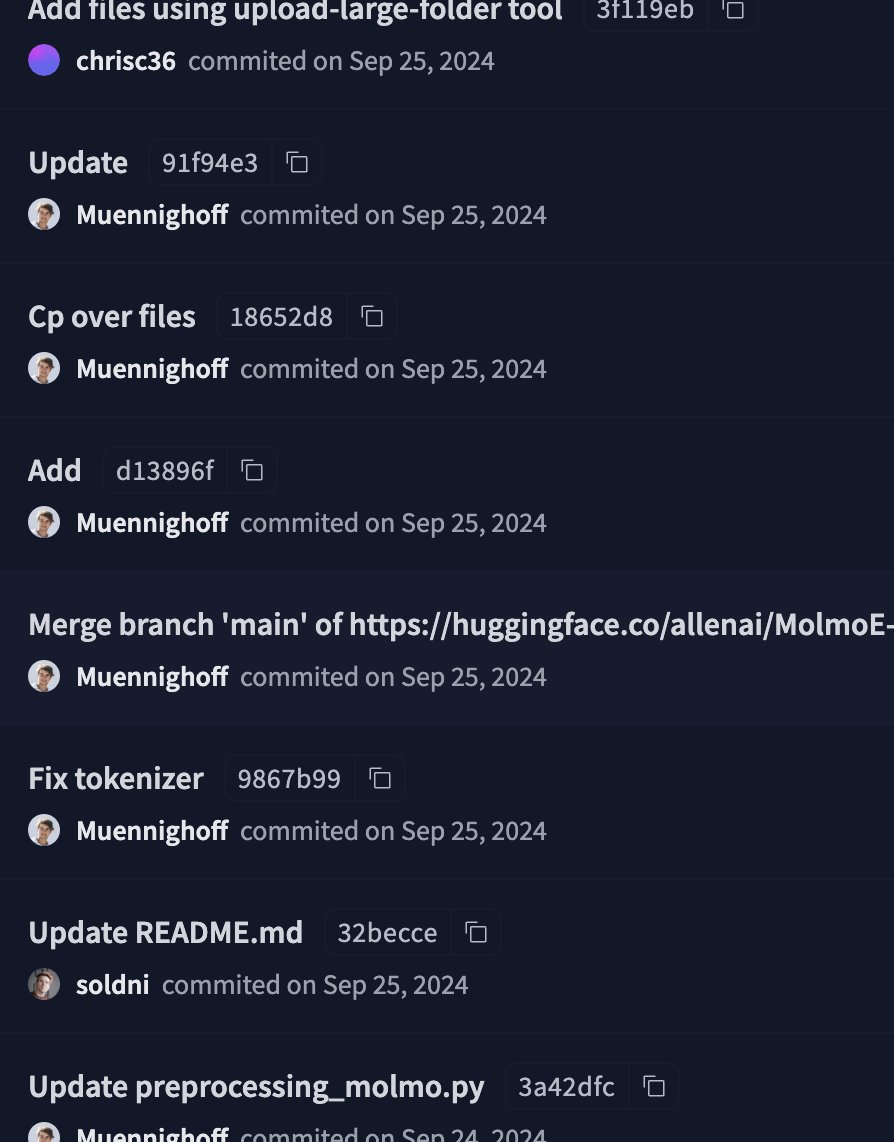

Congrats to Matt Deitke, Chris, Ani Kembhavi & team for the Molmo Award!! Fond memories of us hurrying to fix model inference until just before the Sep release😁