Manav Singhal

@manavsinghal157

Maxim AI | Previously @MSFTResearch | Undergrad @surathkal_nitk

ID: 1281832742863306752

https://manavsinghal157.github.io/ 11-07-2020 06:08:41

43 Tweets

316 Followers

3.3K Following

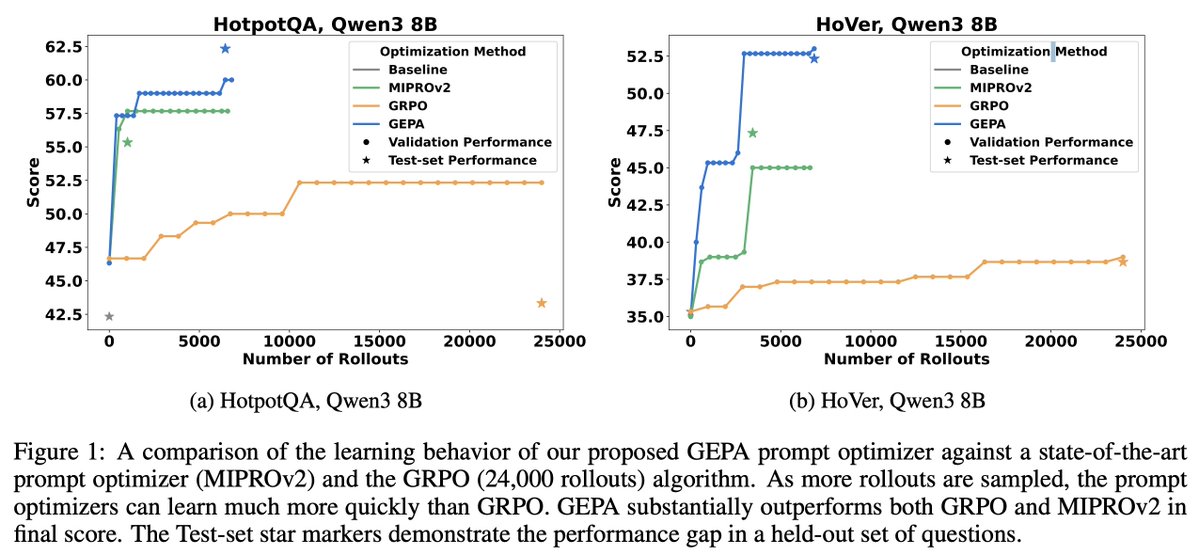

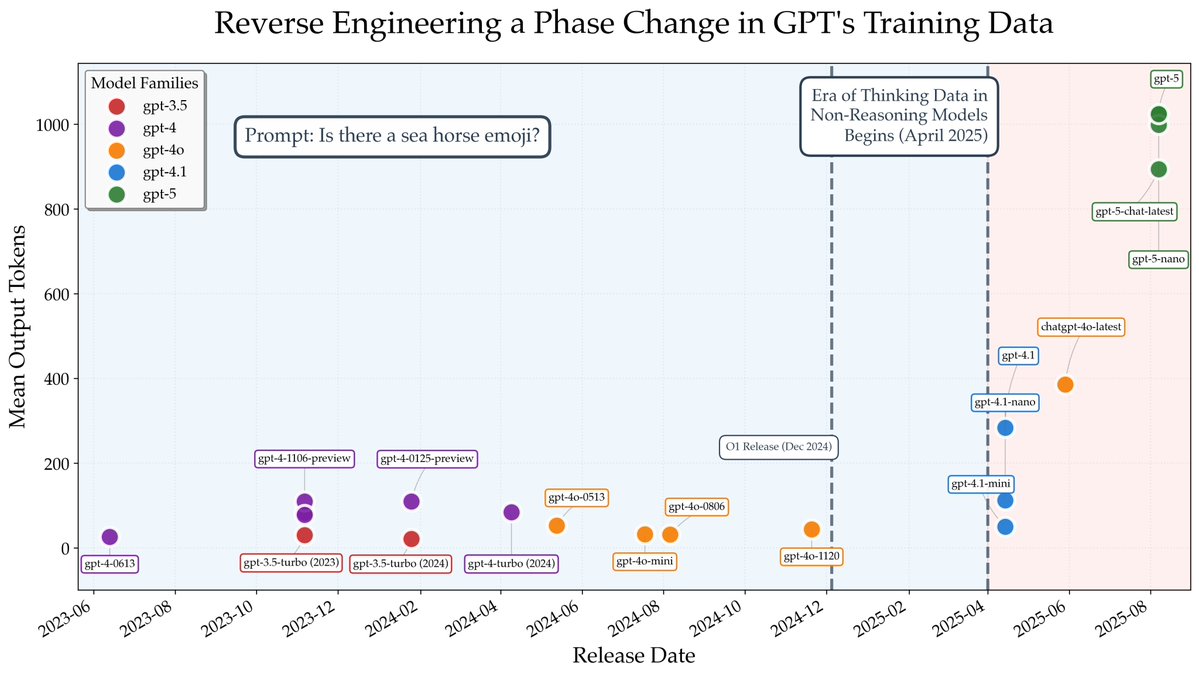

Zero rewards after tons of RL training? 😞 Before using dense rewards or incentivizing exploration, try changing the data. Adding easier instances of the task can unlock RL training. 🔓📈 To learn more, check out our blog post here: spiffy-airbus-472.notion.site/What-Can-You-D…. Keep reading 🧵 (1/n)