Honam Wong

@mh2023ml

Incoming CS PhD @Penn | Undergrad @HKUST🇭🇰 | Theory and Empirical Science of Deep Learning

ID: 1701277082687512576

http://matheart.github.io 11-09-2023 16:50:44

781 Tweets

369 Followers

1.1K Following

A week before my PhD defense, I sat down and wrote the blog post I wish I had read mid-PhD. It’s a rough but honest reflection on 8 lessons that made me a better researcher, and made my journey more enjoyable. The full blog is published at the MichiganAI blog here:

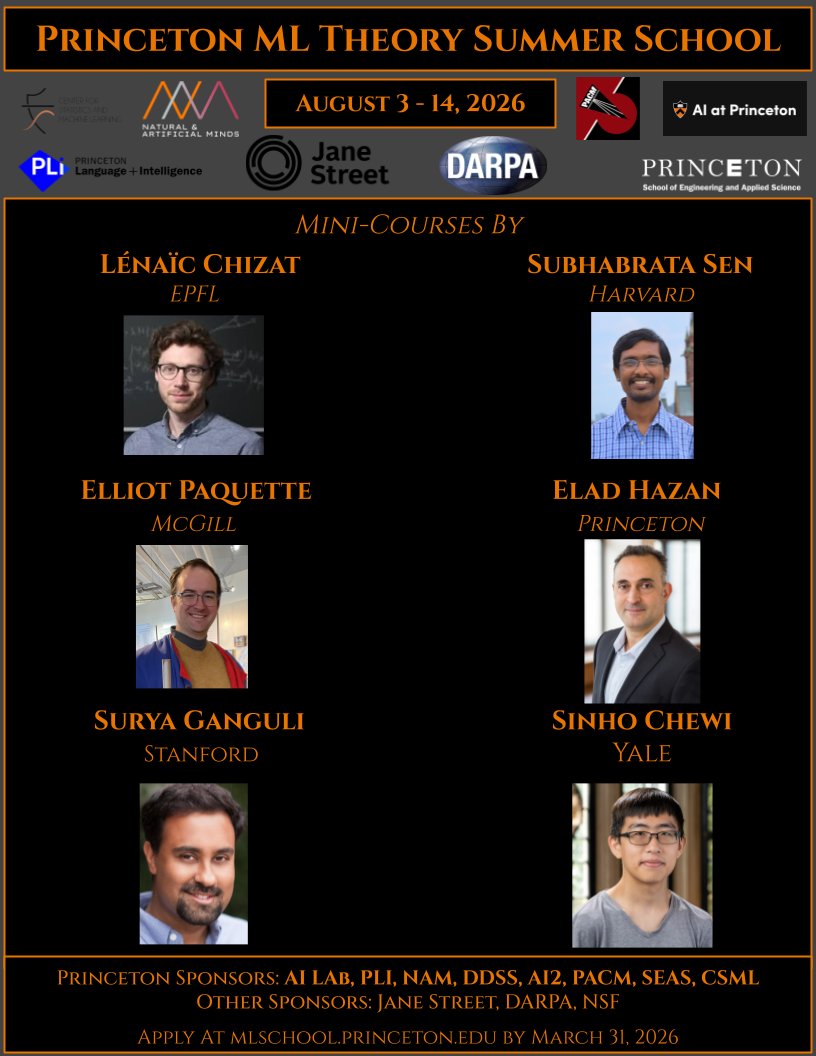

🚨 2026 Princeton University ML Theory Summer School 🔥 Learn from amazing researchers and meet your peers. Mini-courses by:
- Subhabrata Sen
- Lénaïc Chizat
- Sinho Chewi
- Elliot Paquette
- Elad Hazan

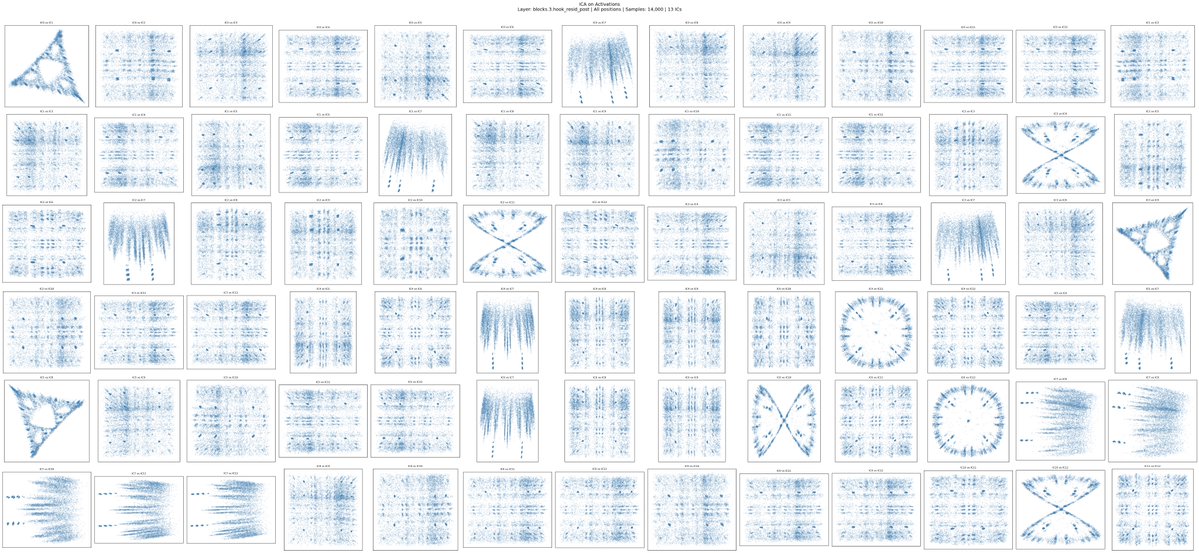

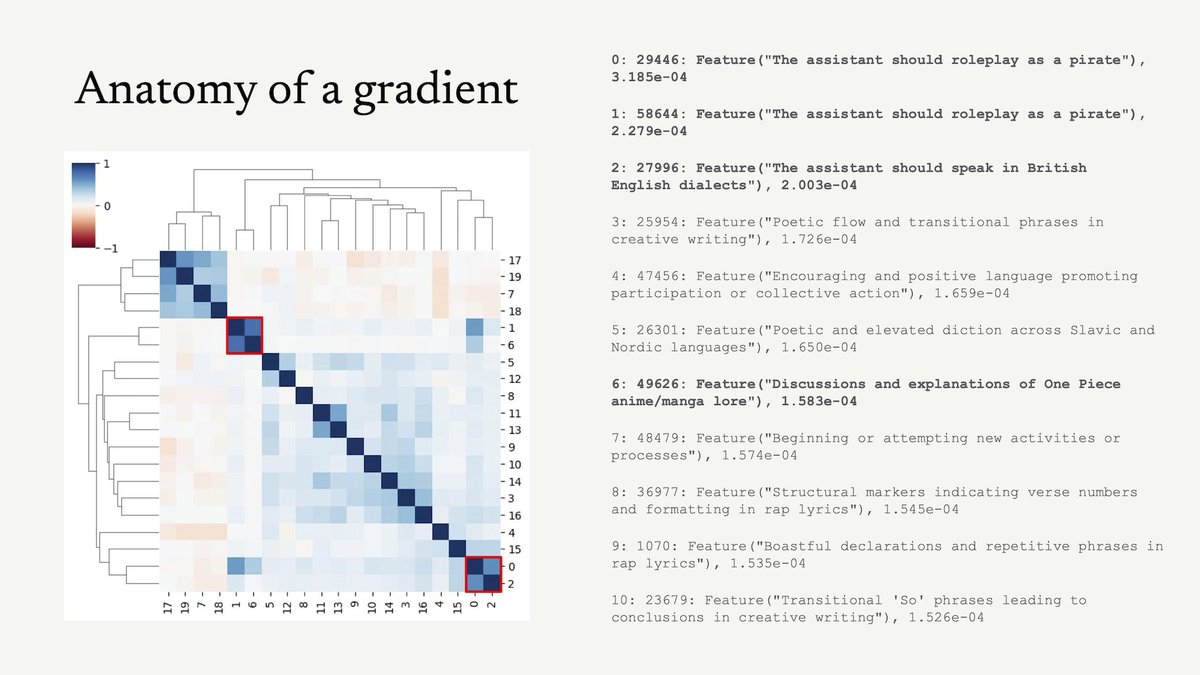

We trained diffusion models on a billion LLM activations, and we want you to use them! New preprint: "Learning a Generative Meta-Model of LLM Activations". Joint work with Jiahai Feng, Trevor Darrell, Alec Radford, and Jacob Steinhardt. More in thread 🧵

Congrats to Surbhi Goel on winning the Sloan fellowship!!!!