Katrin Renz

@katrinrenz

LLMs + Autonomous Driving.

PhD Student with @andreasgeiger0 | Previously at @wayve_ai @Oxford_VGG

ID: 1406661402798985217

http://Katrinrenz.de

20-06-2021 17:14:24

60 Tweets

496 Followers

196 Following

Just 10 days to go! Join our elite IMPRS-IS doctoral program - a partnership with MPI-IS, @uni_stuttgart & Universität Tübingen! Apply here: imprs.is.mpg.de/applicationApp… Deadline to apply: November 15, 2024

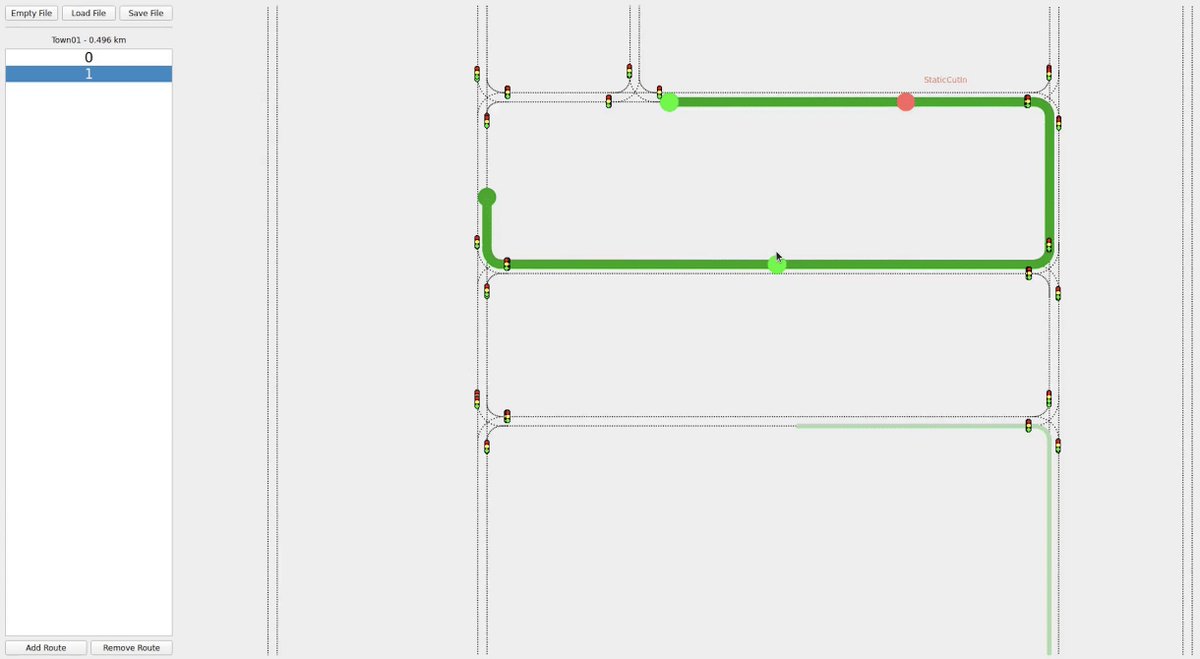

We have just released a new tool to create custom routes and insert scenarios for the CARLA Leaderboard 2.0. The tool was written by our great research assistant Jens Beißwenger 🥳 GitHub: github.com/autonomousvisi… #CARLA #AutonomousDriving

In my first research project, I was super excited about getting any stars on GitHub. Now having a project with 1k stars feels unreal 🤯 It wouldn’t have been possible without the tremendous effort of Chonghao Sima during the main project and afterwards with the challenge 🙏🏼

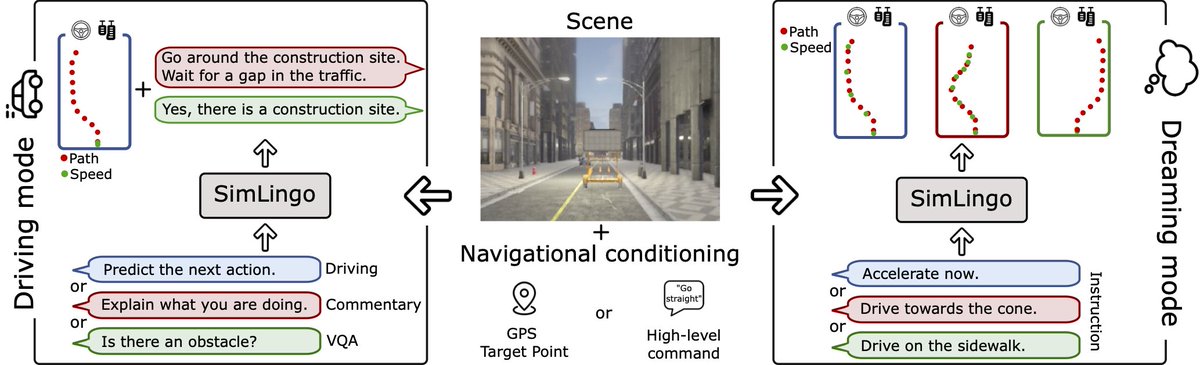

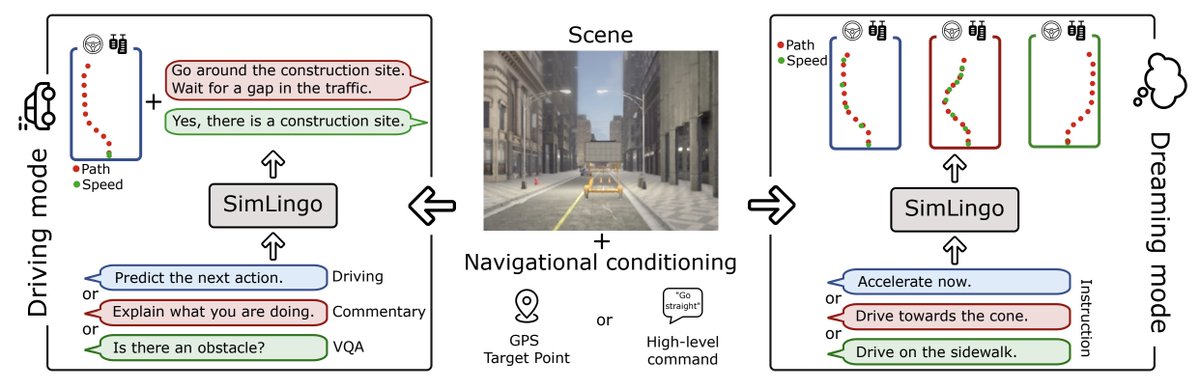

📢 Excited to present our poster "SimLingo" tomorrow at #CVPR2025. Drop by to talk about vision-language-action models, language-action grounding, or anything else :) 📍 Saturday, 10:30 - 12:30, Poster #130. Joint work with Long Chen, Elahe Arani, Oleg Sinavski, Wayve