Jack Mayo

@jackjmayo1

ML theory PhD with @tverven. Interested mostly in parameter-free online learning, (contextual) bandits and RL. Working on something new.

ID: 1417761970183364610

http://jackjmayo.nl 21-07-2021 08:23:07

76 Tweets

281 Followers

644 Following

We are organizing a Multi Agent RL summer school in Lausanne :) Looking forward to the talks by Panayotis Mertikopoulos, Julia Olkhovskaya, Kaiqing Zhang, Chi Jin, Ioannis Panageas, Caglar Gulcehre, Giorgia Ramponi, and Maryam Kamgarpour!! Apply here: sites.google.com/view/marl-scho…

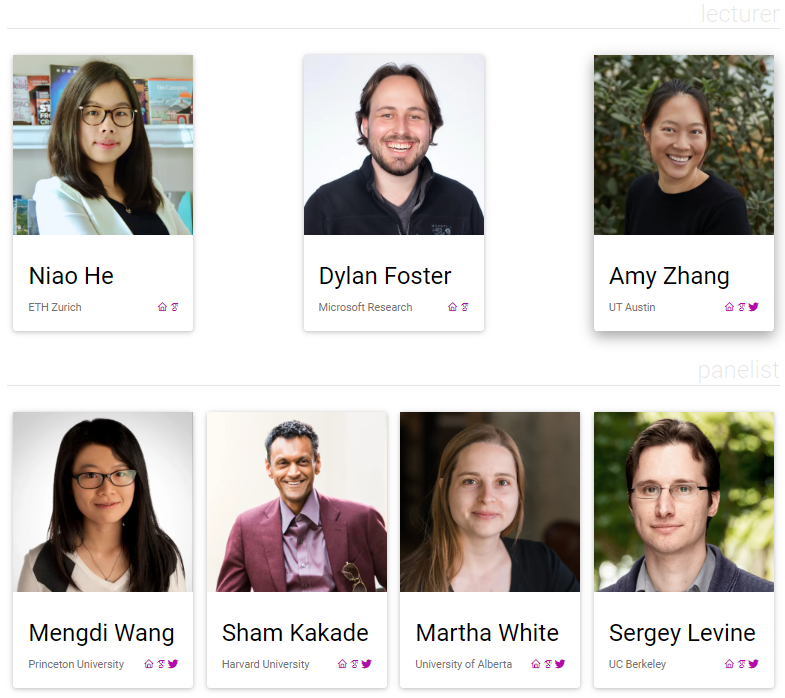

🧵 Thrilled to announce the #ICML RL workshop 'Aligning RL Experimentalists and Theorists'! We will have several talks and a panel delivered by a super lineup of speakers: Martha White, Sham Kakade, Amy Zhang, Dylan Foster, Niao He, Sergey Levine, and Mengdi Wang. 1/3

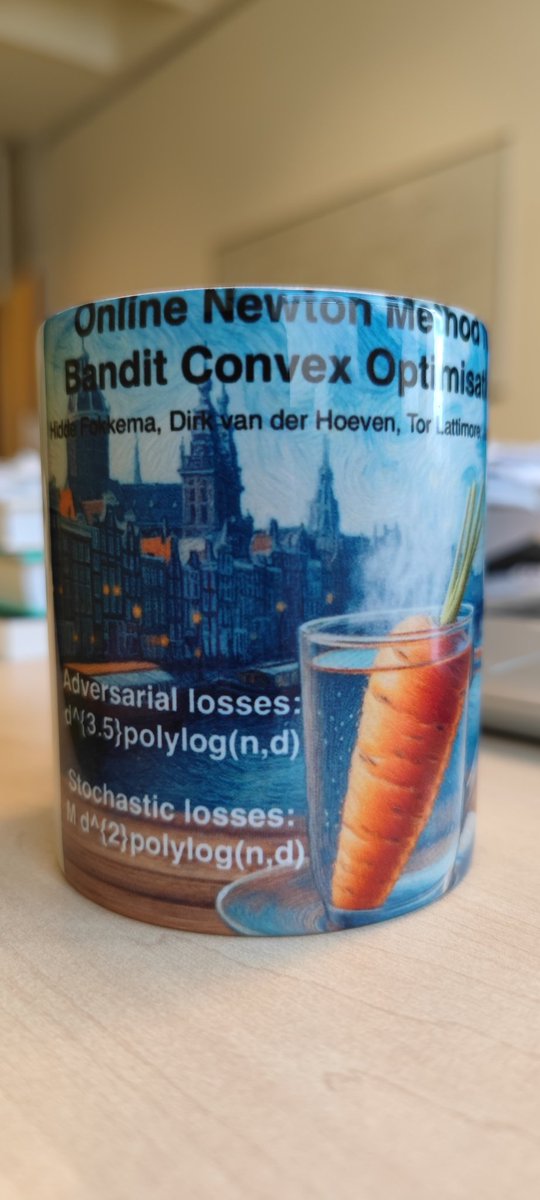

One of the manifold upsides of collaborating with the brilliant Dirk van der Hoeven. If you know, you know.

Great piece by Gene Li outlining some foundational open problems in RL theory—doubling conveniently as a guide to the more animated debates you’ll overhear at an RL Theory Virtual Seminars seminar. Especially handy if (like myself) you're accustomed to the comforts of fixed state-spaces.