Edoardo Pona

@edoardopona

ID: 3028907819

10-02-2015 22:16:51

96 Tweets

56 Followers

611 Following

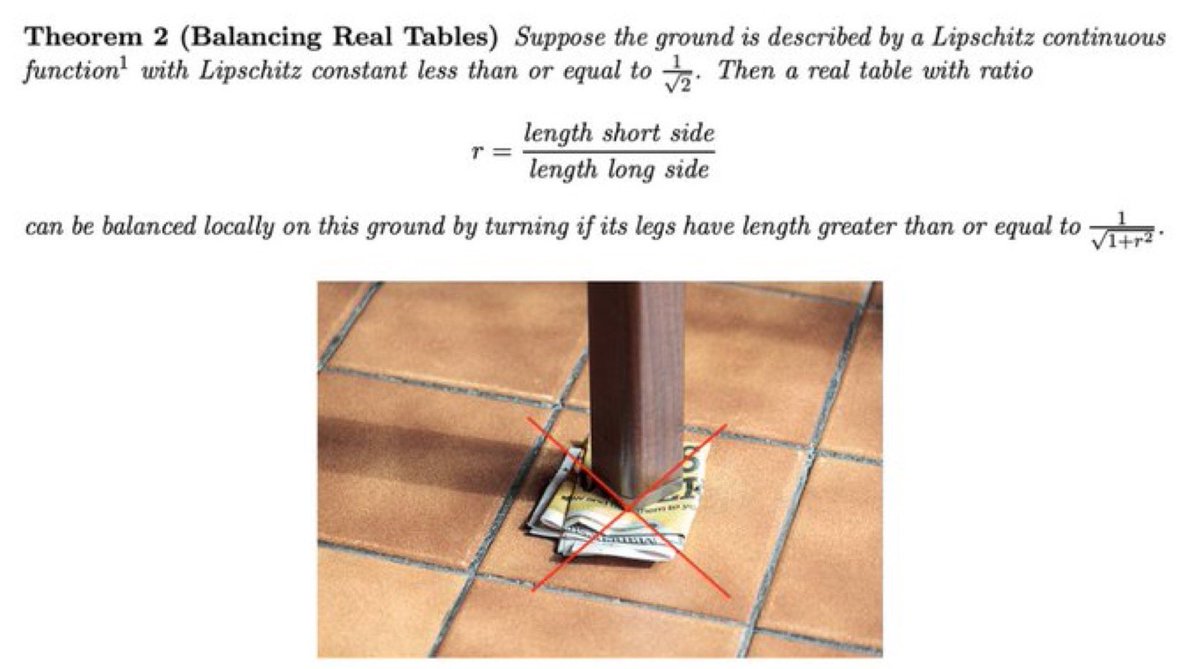

LLMs represent words as vector embeddings. Do they represent *functions* as vectors too? Yes! This has implications for how we think about “reasoning” in language models. New preprint w/ Millicent Li, Arnab Sen Sharma, Aaron Mueller, byron wallace, David Bau: functions.baulab.info

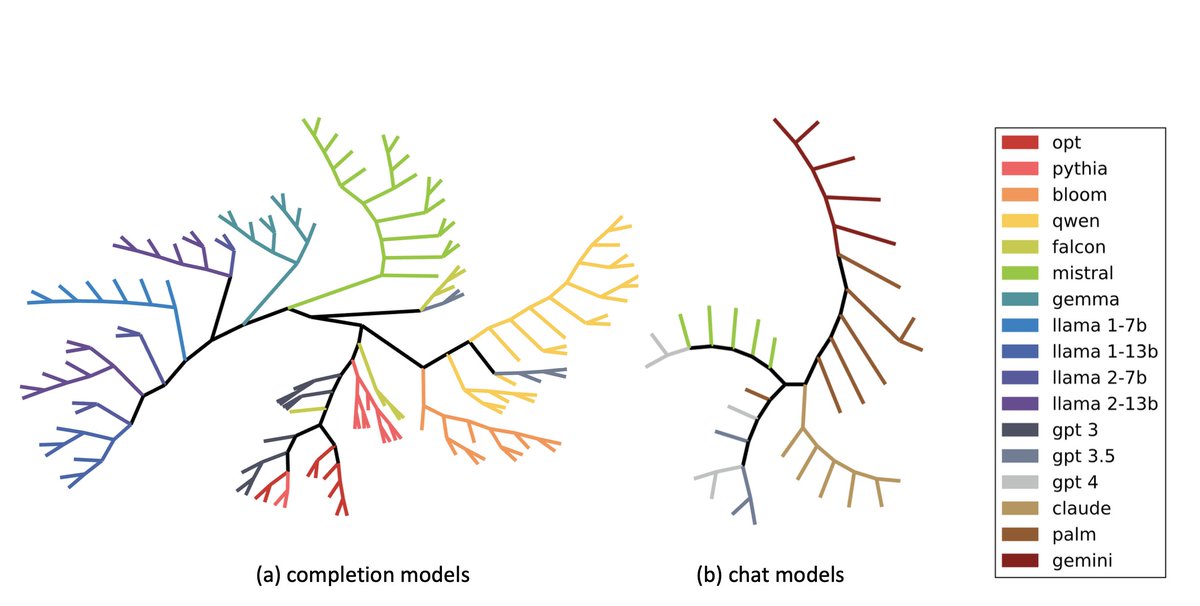

🚨New preprint🚨 Can LLM finetuning relationships be inferred from model outputs alone? We found that adapting phylogenetic algorithms 🧬 to language models 🤖 helps identify families of models and can even predict their performance. With Stefano Palminteri (@stepalminteri.bsky.social) and Pierre-Yves Oudeyer! 1/9