Dulhan Jayalath

@dulhanjay

Reading brains with ML in PhD @UniofOxford. Formerly Student Researcher @GoogleDeepMind. All opinions stolen from more interesting people.

ID: 839537448233418757

https://dulhanjayalath.com 08-03-2017 18:05:00

9 Tweets

103 Followers

352 Following

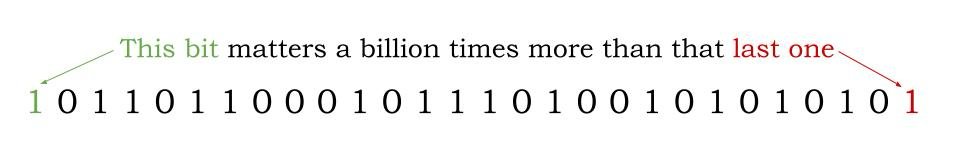

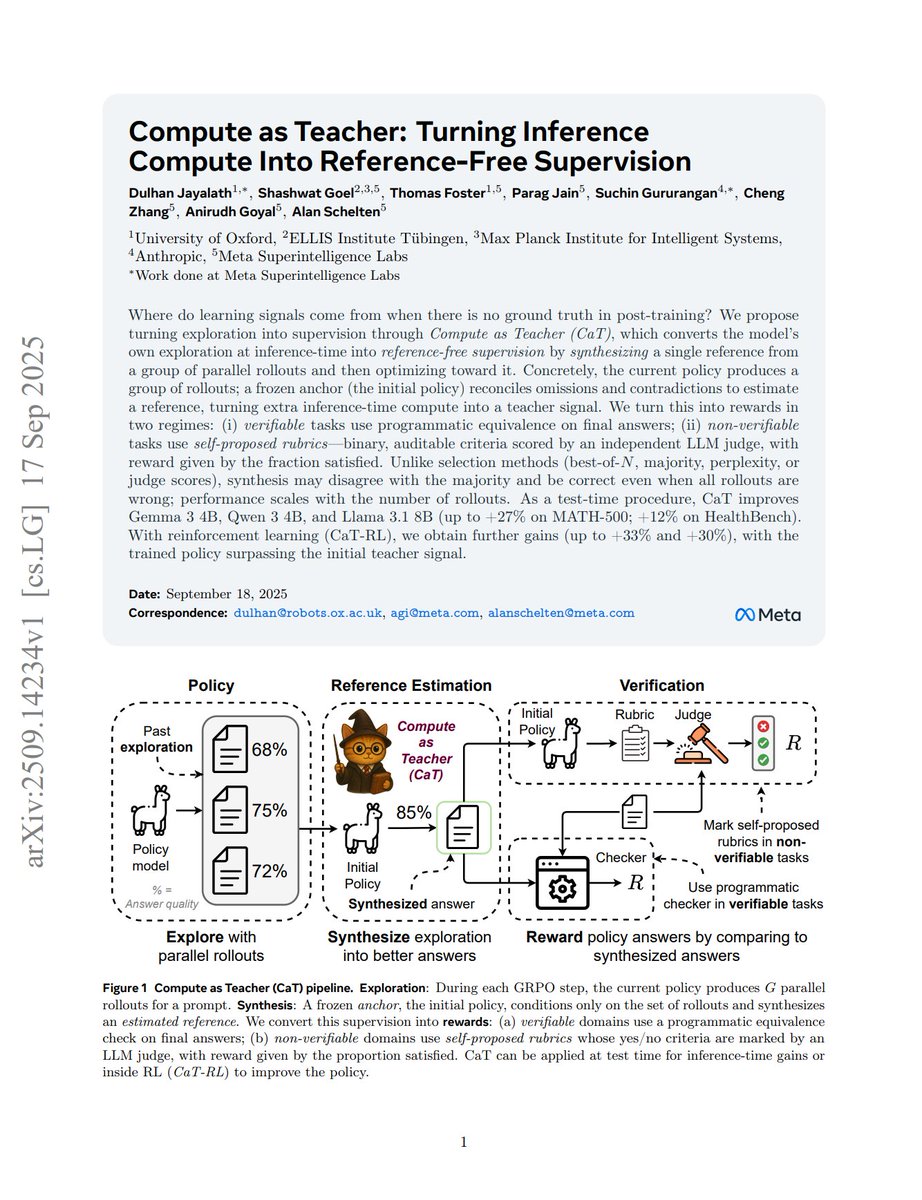

There's been confusion about the importance of RL since John Schulman's excellent blog post showing that it learns surprisingly few bits of information. Here's my blog post on what we might be missing: not all bits are created equal. Some bits of information matter more than others. This

![fly51fly (@fly51fly) on Twitter photo: [LG] Compute as Teacher: Turning Inference Compute Into Reference-Free Supervision. D Jayalath, S Goel, T Foster, P Jain... [Meta Superintelligence Labs] (2025). arxiv.org/abs/2509.14234](https://pbs.twimg.com/media/G1KLojpbwAA9tM7.jpg)

![Yunzhen Feng (@feeelix_feng) on Twitter photo: 🔥 NEW PAPER: What makes reasoning traces effective in LLMs? Spoiler: It's NOT length or self-checking. We found a simple graph metric that predicts accuracy better than anything else, and proved it causally. 🧵[1/n]](https://pbs.twimg.com/media/G1lPOuuW0AAoty1.jpg)