CogInterp Workshop @ NeurIPS 2025

@coginterp

ID: 1941609083263766528

https://coginterp.github.io/neurips2025/ 05-07-2025 21:24:41

5 Tweets

81 Followers

0 Following

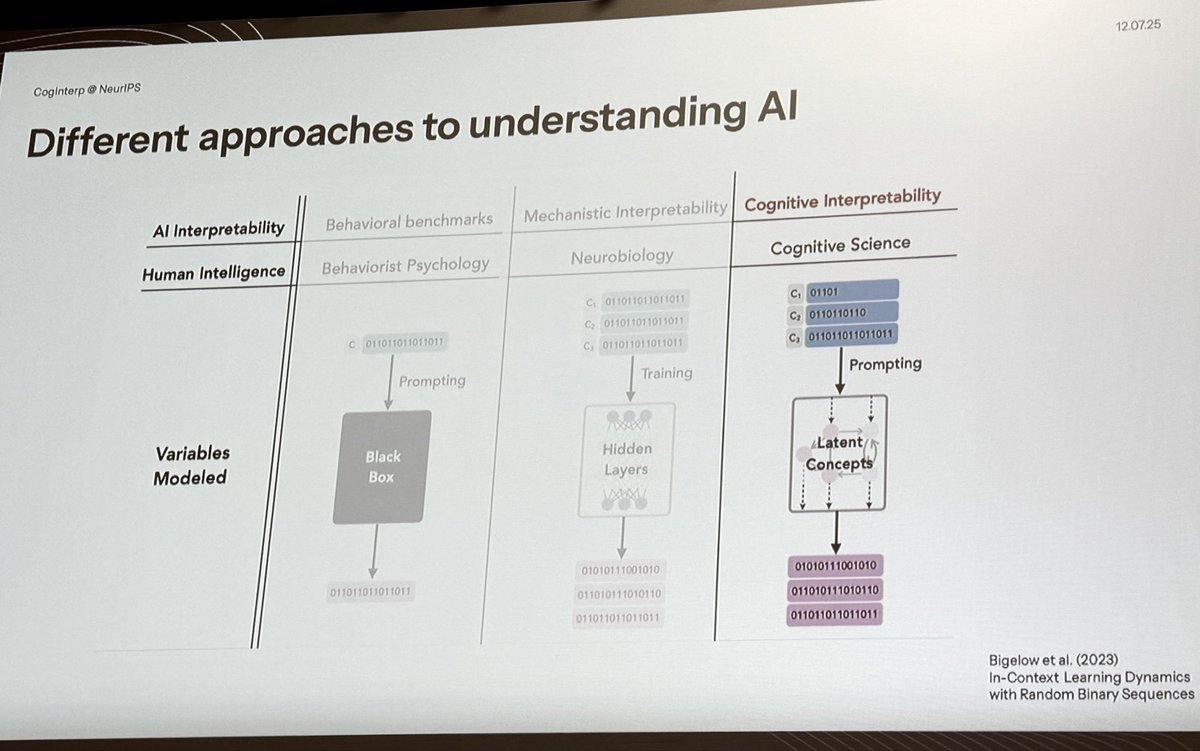

At the CogInterp Workshop @ NeurIPS 2025. coginterp.github.io/neurips2025/ This slide explains MechInterp vs. CogInterp:

Ari Holtzman takes us into the mind of an LLM to help us understand how these models see the world, and what might be a good road forward for studying them.

Erin Grant discusses dissociations between function and representation, and asks whether representational alignment is enough for understanding deep neural networks.

For our third spotlight talk, Sonia Murthy uses probabilistic cognitive models to understand the value trade-offs in LLMs that enable pragmatic reasoning about politeness in speech acts.

Our final speaker, Sydney Levine, makes a radical proposal: building computational models of human moral judgements and using them as an AI system for making moral judgements.

Our Best Paper Award goes to Nathaniel Imel and Noga Zaslavsky for their excellent paper “Culturally transmitted color categories in LLMs reflect a learning bias toward efficient compression”!

Honored and thrilled that our work received the CogInterp Workshop @ NeurIPS 2025 best paper award! 💫 📄 Extended paper: arxiv.org/pdf/2509.08093 🧵 Highlights: x.com/NogaZaslavsky/… NeurIPS Conference #NeurIPS2025

Our last Stanford guest lecture: Ekdeep Singh on what counts as an explanation & a neuro-inspired "model systems approach" to interp. Plus, how in-context learning and many-shot jailbreaking are explained by LLM representations changing in-context (as a case study for that approach).