Cats

@cats_cr

cats are like dogs, except they're not/\///\\\////\\\\//\\\\\\\///////////\\\\\\\\\\\\ $dking, $tibbir, $kta //

discord.gg/webuildscore

ID: 817873379390849025

http://dking.bot 07-01-2017 23:19:43

9.9K Tweets

731 Followers

2.2K Following

Joshua Field templar understated part which no one really appreciates yet is that the system is fully incentivized and adversarial. No one, no lab, no team, no soul in the world has ever cracked that at any scale of model, and it's quite literally the linchpin of functional decentralized training.

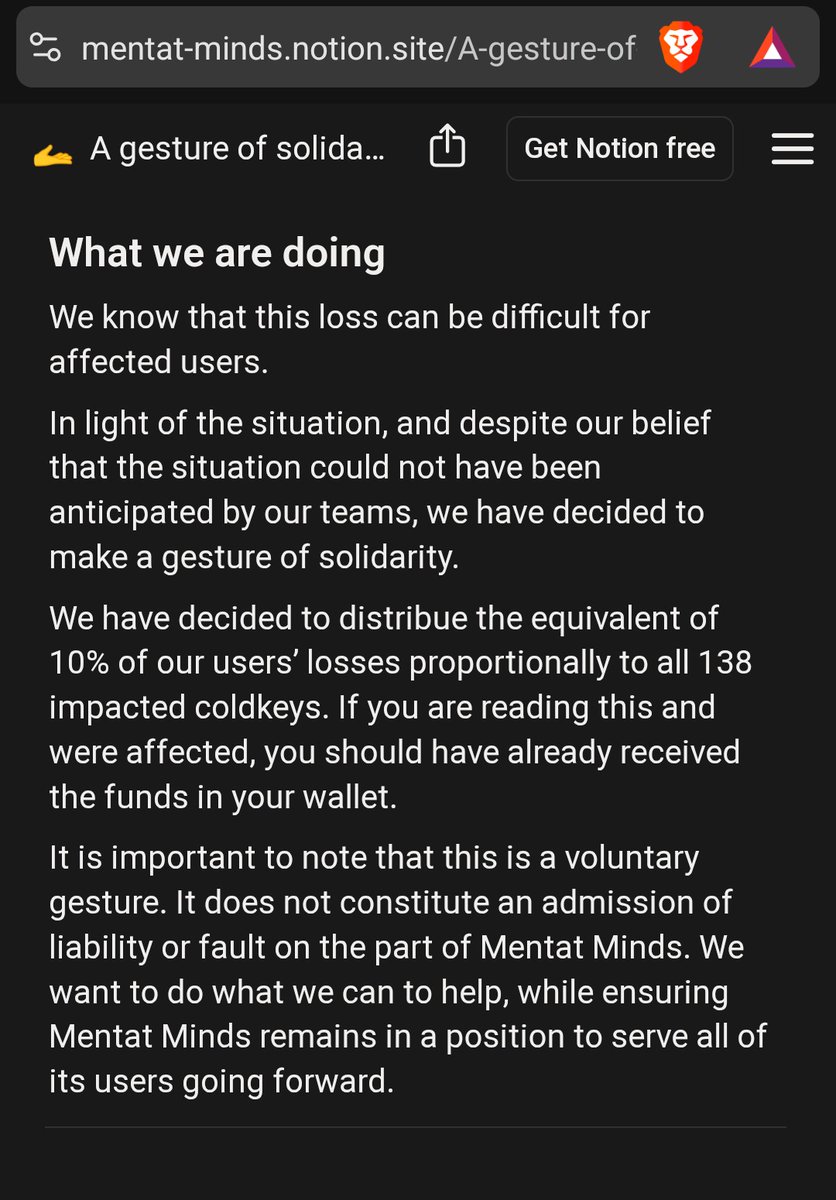

I'd rather not trust your indexes. Stop blaming Openτensor Foundaτion. It's not their fault you didn't have proper guardrails in place. Reimbursing your investors with 10% of their losses is a fucking joke.