Big Data Finance

@bigdatafinance

The original and most rigorous exploration of Big Data in Finance! bdf.bigdatafinance.org

ID: 1265715001

https://www.bigdatafinance.org 13-03-2013 23:30:35

910 Tweets

1.1K Followers

2.2K Following

Even Snoop Dogg loves #AI.

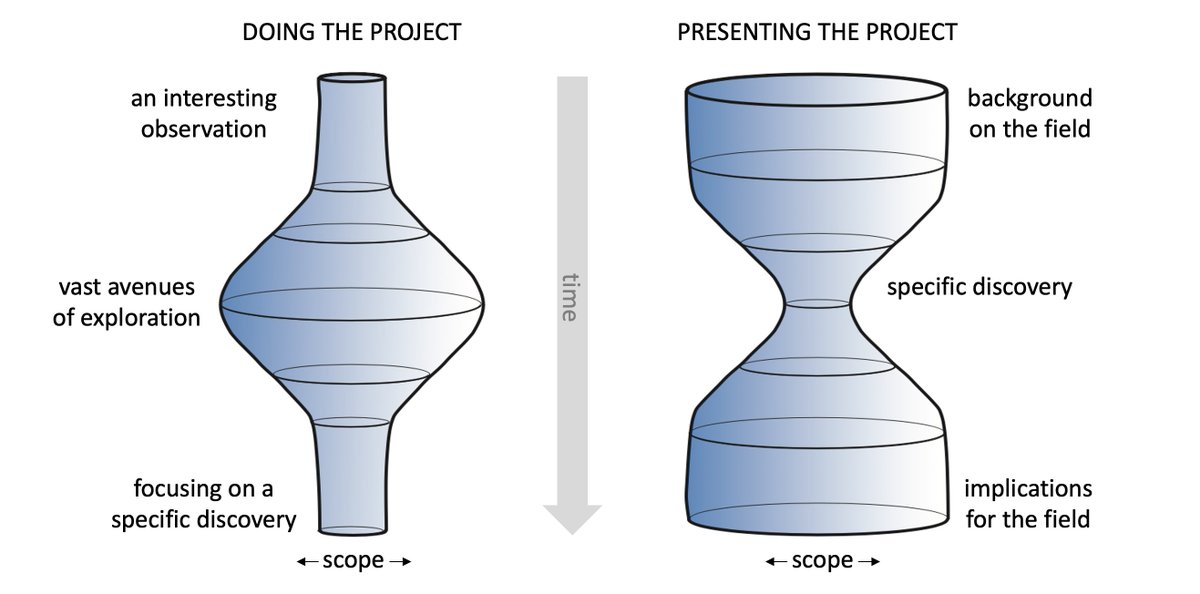

Happy to share our brand-new paper on using #AI to predict #futures returns, co-authored with a very creative, out-of-the-box thinker, Cornell University Financial Engineering Manhattan (orie.cornell.edu/orie/cfem) student Dan Robinson: papers.ssrn.com/sol3/papers.cf…