Felix

@felix1987_

Senior Software Engineer @JinaAI_

ID: 13790222

21-02-2008 21:28:35

1.1K Tweets

396 Followers

819 Following

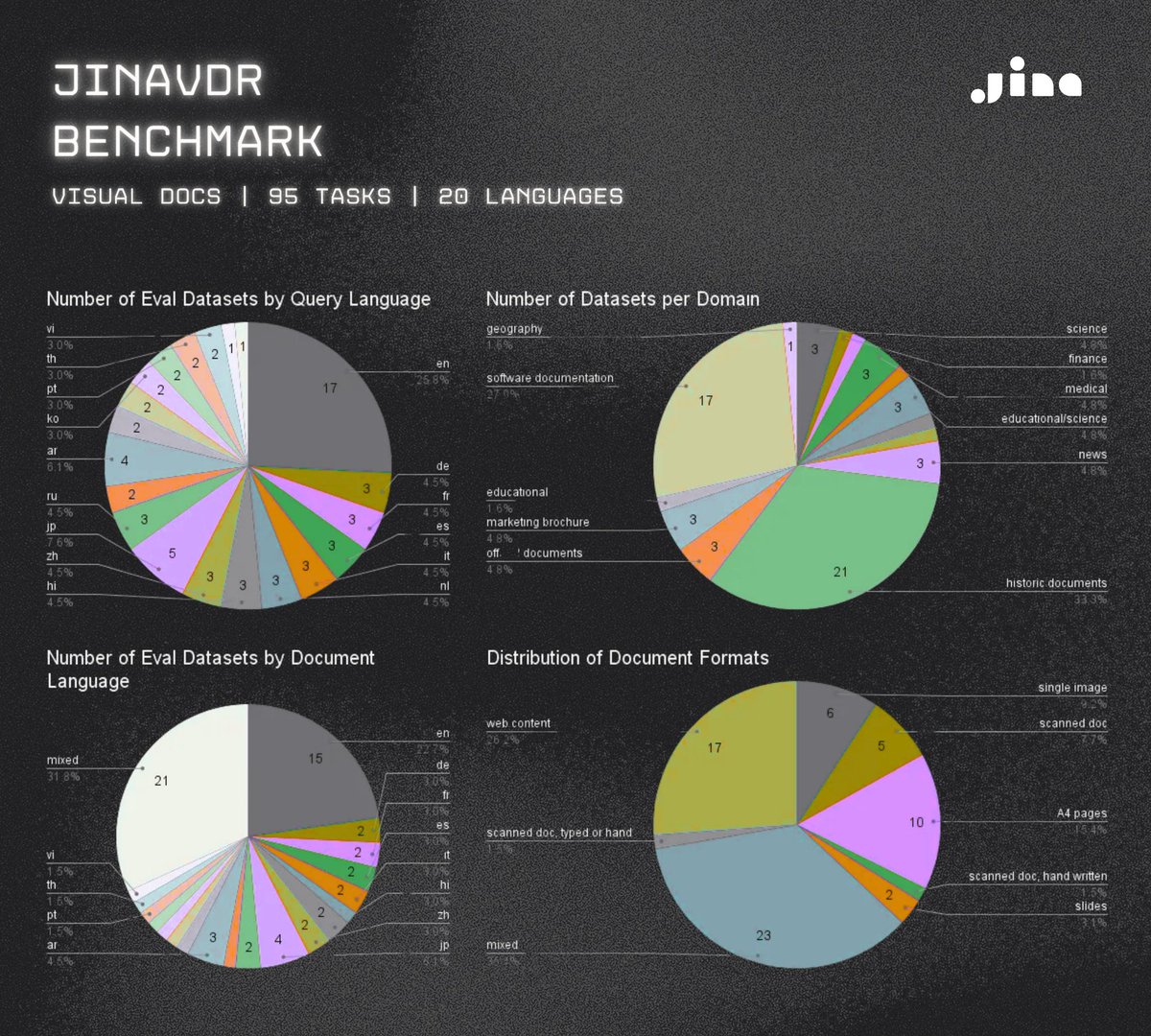

How Jina AI built its 100-billion-token web grounding system with Cloud Run GPUs (Google Cloud, cloud.google.com/blog/products/…)

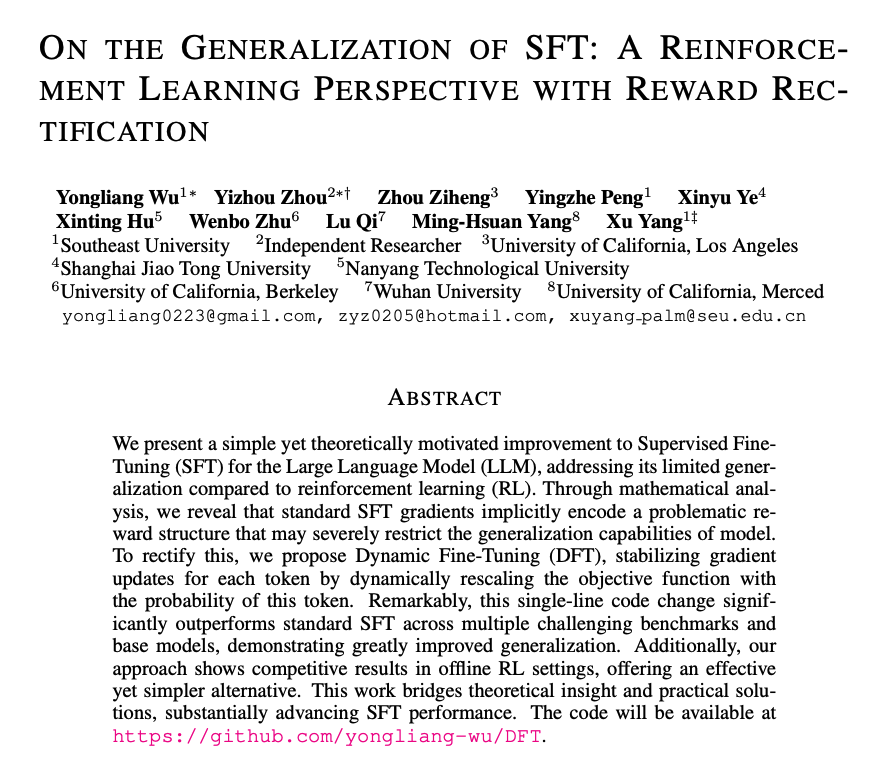

Meet my colleague Michael Günther at SIGIR 25 if you are interested in embedding, reranking, or small language model training.