Shoaib Ahmed Siddiqui

@shoaibasiddiqui

PhD student @CambridgeMLG | Ex-intern @MSR @NVIDIA @DFKI | Primarily interested in SSL, LLMs, data auditing, and empirical theory of deep learning

ID: 3124646343

http://shoaibahmed.github.io 28-03-2015 20:01:10

134 Tweets

690 Followers

4.4K Following

🧵 Announcing Open Philanthropy's Technical AI Safety RFP! We're seeking proposals across 21 research areas to help make AI systems more trustworthy, rule-following, and aligned, even as they become more capable.

6/13 ICLR submissions accepted! (3 posters, 2 orals, 1 blog post) Congrats to Bruno Mlodozeniec, Richard Turner, Clement Neo, Fazl Barez, Neel Alex, Shoaib Ahmed Siddiqui, Tom Bush, Cas (Stephen Casper), Dylan Hadfield-Menell, and all the other authors! Summaries in the thread below... 🧵🧵

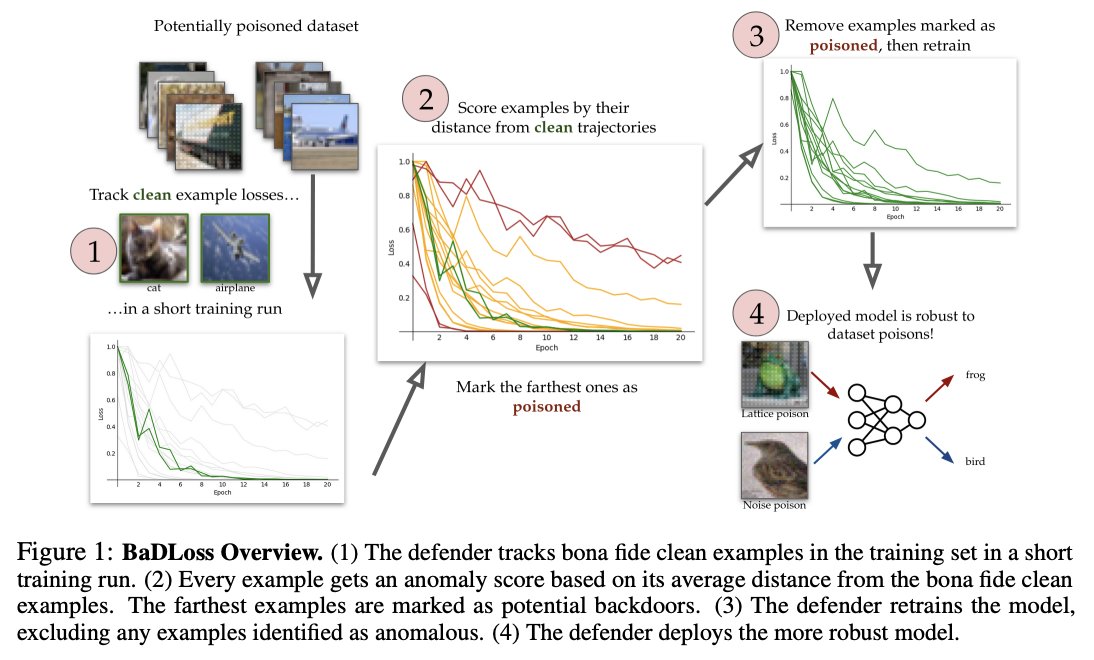

POSTER 1) Protecting against simultaneous data poisoning attacks (Neel Alex, Shoaib Ahmed Siddiqui, et al.). We introduce a more realistic setting in which the training data is poisoned in multiple ways at once. Existing defenses fail in this setting, but our defense based on training dynamics works: arxiv.org/abs/2408.13221