Stella Biderman

@BlancheMinerva

Open source LLMs and interpretability research at @BoozAllen and @AiEleuther. My employers disown my tweets. She/her

ID:1125849026308575239

http://www.stellabiderman.com 07-05-2019 19:44:59

11.6K Tweets

14.5K Followers

748 Following

Tamay Besiroglu but current models don’t allocate parameters to rotary embs!

this means the Chinchilla D=20*N is skewed already for the actual param counts of most models, even if it held across datasets! If we disregarded the pos. encoding params the coefficients would change

Tamay Besiroglu a super-fun arcane historical detail:

Gopher (and by extension Chinchilla) use Transformer-XL style position encodings. This means they spend 20B params (Gopher) and 5B params (Chinchilla) on just rel. position encoding!

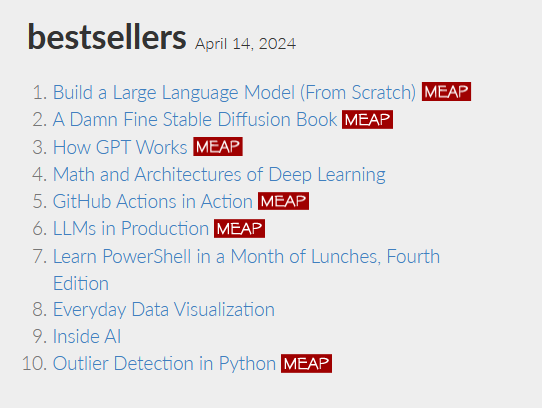
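The arithmetic behind the claim that the coefficient would change can be sketched as follows. This is only a rough illustration: the 5B position-encoding figure comes from the tweet above, while the 70B total parameter count and 1.4T training tokens are assumptions taken from the commonly reported Chinchilla configuration, not from this thread.

```python
# Rough sketch: how the tokens-per-parameter ratio shifts when the
# relative position encoding parameters are left out of the count.

def tokens_per_param(tokens: float, params: float) -> float:
    """Return the ratio of training tokens to parameters."""
    return tokens / params

# Assumption: commonly reported Chinchilla figures (not stated in the thread).
CHINCHILLA_TOTAL_PARAMS = 70e9
CHINCHILLA_TOKENS = 1.4e12

# From the tweet: parameters spent on relative position encodings.
CHINCHILLA_POS_PARAMS = 5e9

ratio_all = tokens_per_param(CHINCHILLA_TOKENS, CHINCHILLA_TOTAL_PARAMS)
ratio_no_pos = tokens_per_param(
    CHINCHILLA_TOKENS, CHINCHILLA_TOTAL_PARAMS - CHINCHILLA_POS_PARAMS
)

print(f"tokens/param, all params counted:     {ratio_all:.2f}")
print(f"tokens/param, pos. encoding excluded: {ratio_no_pos:.2f}")
```

Under these assumptions the ratio moves from 20 to roughly 21.5 tokens per parameter, which is the kind of shift the reply is pointing at.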

#HowGPTWorks, the book w/ Stella Biderman and Drew Farris, is already up to #3 on the best sellers! We are removing all the mystery behind WTF a language model is and how it works, in a way that is accessible to people without any AI/ML training. Manning Publications manning.com/books/how-gpt-…

Really amazing work by the Hugging Face team! Infrastructure work, including dataset work, evaluations work, and building libraries, is the single highest-leverage thing you can do in AI. This will provide dividends for the broader AI community for years to come.

🚀 Introducing Pile-T5!

🔗 We (EleutherAI) are thrilled to open-source our latest T5 model trained on 2T tokens from the Pile using the Llama tokenizer.

✨ Featuring intermediate checkpoints and a significant boost in benchmark performance.

Work done by Lintang Sutawika, me…